Kubernetes - Building a Mixed Linux and Windows Cluster using Packer, Terraform, Ansible and KVM - Part 3: Running Applications

This post is the third and final part in a series on creating a Kubernetes cluster containing both Linux and Windows workers. The first post details building the virtual machine images ready to be configured as Control Plane or Worker nodes. The second post covers initializing the cluster using Terraform and Cloud-Init. This post is on how to deploy applications to the cluster, and how to make them available outside of the cluster.

Errata

In the last post, we looked at how to use Cloud-Init to initialize members of the cluster. When writing this post, I discovered that one of the lines was incorrect. With that line in place, applications deployed on the Windows workers could only be reached using a Service of type NodePort, and only if you knew the IP and port of the node the application runs on.

A single character change in the template for creating the first Control Plane node fixes this: -

Before

curl -L https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml | sed 's/vxlan",/vxlan",\n "VNI" : 4096,\n "Port": 4789/g' | kubectl apply -f -

After

curl -L https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml | sed 's/vxlan"/vxlan",\n "VNI" : 4096,\n "Port": 4789/g' | kubectl apply -f -

This is because the vxlan line in the kube-flannel.yml configuration does not have a comma at the end of it, so the original expression never matched: -

[...]

kind: ConfigMap

apiVersion: v1

metadata:

name: kube-flannel-cfg

namespace: kube-system

labels:

tier: node

app: flannel

data:

cni-conf.json: |

{

"name": "cbr0",

"cniVersion": "0.3.1",

"plugins": [

{

"type": "flannel",

"delegate": {

"hairpinMode": true,

"isDefaultGateway": true

}

},

{

"type": "portmap",

"capabilities": {

"portMappings": true

}

}

]

}

net-conf.json: |

{

"Network": "10.244.0.0/16",

"Backend": {

"Type": "vxlan"

}

}

[...]

Using the correct sed expression, this is changed to: -

[...]

net-conf.json: |

{

"Network": "10.244.0.0/16",

"Backend": {

"Type": "vxlan",

"VNI": 4096,

"Port": 4789

}

}

[...]

Without this, the Kubernetes proxy (i.e. the application which forwards traffic to the correct node, regardless of which node the traffic ingresses first) is not able to communicate with the Windows nodes.

The original post has been updated to reflect this, but for anyone who has used it already, ensure you change your Cloud-Init template to match.
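The difference between the two expressions can be checked locally without touching the cluster. The sketch below feeds a single sample line through both; the function names before and after are my own, and it assumes GNU sed (which expands \n in the replacement text): -

```shell
# Sample line as it appears in kube-flannel.yml (no trailing comma).
sample='        "Type": "vxlan"'

# Original expression: expects a comma after vxlan", so it never matches.
before() { sed 's/vxlan",/vxlan",\n "VNI" : 4096,\n "Port": 4789/g'; }

# Corrected expression: matches the line as it actually appears.
# Assumes GNU sed, which turns \n in the replacement into a newline.
after() { sed 's/vxlan"/vxlan",\n "VNI" : 4096,\n "Port": 4789/g'; }

printf '%s\n' "$sample" | before   # prints the line unchanged
printf '%s\n' "$sample" | after    # prints three lines: Type, VNI and Port
```

If the first command already prints three lines, the upstream manifest has changed and the expression needs revisiting.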

Tasks

The aim of this post is to run applications on the Linux and Windows workers and expose them outside of the cluster.

We need to make a distinction between applications that run internal to the cluster and those exposed externally. Not every application that runs in a Kubernetes cluster needs to be exposed to user traffic. Examples of this would be: -

- Monitoring agents (e.g. Prometheus exporters) when the monitoring application (e.g. Prometheus) runs inside the cluster

- Webhooks for interacting with/manipulating Kubernetes resources within the cluster (e.g. Dynamic Admission Control)

- A datastore/API that is only accessed by microservices/containers within the cluster

To achieve this we need to: -

- Be able to manage the cluster using kubectl

- Ensure that Linux applications run on the Linux workers, and Windows applications run on the Windows workers

- Set up an Ingress Controller

  - An Ingress object in Kubernetes is what manages external access to a service within the cluster

- Set up a Kubernetes-native Load Balancer that exposes Kubernetes Services

  - Specifically, Services that have a type of LoadBalancer

- Run some applications!

While it may seem like the Ingress and LoadBalancer steps achieve similar goals, there is an important distinction to be made. A LoadBalancer can expose a service, but if every service had an associated LoadBalancer you may run out of resources on your chosen Load Balancer. This could be IPs, services, resource limits, or even money to pay for the Load Balancers!

Ingress objects allow you to route different paths to different services, while sharing the same LoadBalancer endpoint. For example, you could route /important-api to the VeryImportantAPI Kubernetes Service, and the /not-as-important-api to the UnimportantAPI Kubernetes Service, while reusing the same Load Balancer resource.

In most cloud providers, there is a mechanism for automatically creating Load Balancers based upon Ingress objects and Services (e.g. the AWS ALB Ingress Controller). As we are running this on KVM though, this is not the case, so we need another way to achieve this.

Administering the cluster

There are multiple ways to control access to a Kubernetes cluster. For example, if you run a cluster on AWS, you can use the AWS IAM Authenticator. If you use the Red Hat distribution of Kubernetes (OpenShift), you can use many different methods including single sign-on via LDAP, OAuth2, and much more.

In this instance though, we will just use the admin.conf configuration file generated by the Control Plane. In a production scenario, I would recommend using a more robust method of authentication and authorization, but for lab purposes this is fine.

To use this configuration file, navigate to /etc/kubernetes/admin.conf on one of the Control Plane nodes, copy the contents, and place it in the default Kubernetes configuration location on your machine. For Linux and Mac, this is in the .kube directory in your home directory, named config (i.e. ~/.kube/config). On Windows, this would be the same, but using your %USERPROFILE% location (e.g. C:\Users\yetiops\.kube\config).
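As an alternative to copying and pasting, the file can be fetched over SSH. The helper below is only a sketch: the function name and the lab-admin.conf file name are my own, and it assumes you have SSH access to a Control Plane node with passwordless sudo (admin.conf is normally readable by root only). It writes to a separate file rather than ~/.kube/config so that any existing configuration is left alone: -

```shell
# Copy admin.conf from a Control Plane node into a dedicated kubeconfig file.
# fetch_kubeconfig and "lab-admin.conf" are hypothetical names for this sketch.
fetch_kubeconfig() {
  local host="$1"
  local dest="${2:-$HOME/.kube/lab-admin.conf}"
  mkdir -p "$(dirname "$dest")"
  # umask 077 means a newly created file is readable by the current user only;
  # the file holds cluster-admin credentials.
  ( umask 077; ssh "$host" sudo cat /etc/kubernetes/admin.conf > "$dest" )
  echo "$dest"
}

# Usage (host name taken from the node list in this post):
#   export KUBECONFIG="$(fetch_kubeconfig k8s-cp-01)"
#   kubectl get nodes
```

kubectl reads the KUBECONFIG environment variable, so this keeps the lab credentials out of the default file.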

Download kubectl using these instructions and then you should be able to access the cluster: -

$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-cp-01 Ready master 30h v1.19.3

k8s-cp-02 Ready master 30h v1.19.3

k8s-cp-03 Ready master 30h v1.19.3

k8s-winworker-0 Ready <none> 30h v1.19.3

k8s-worker-01 Ready <none> 30h v1.19.3

k8s-worker-02 Ready <none> 30h v1.19.3

k8s-worker-03 Ready <none> 30h v1.19.3

Ensure applications run on the correct workers

If you deploy applications to a cluster containing Linux and Windows workers, the Kubernetes control plane will try to schedule these applications on any available worker, regardless of operating system. If a Linux application using a Linux container image is scheduled on a Windows worker, its Pods will fail to start. The same is true for a Windows container scheduled on a Linux worker.

To avoid this behaviour, we can deploy a RuntimeClass and a Taint.

A RuntimeClass contains two main sections. The first is a NodeSelector. This refers to types of nodes (e.g. nodes running Windows on x86 64-bit) and their associated container runtime. Multiple different container runtimes exist like Docker on Linux, Docker on Windows, Kata Containers and more. Not only that, but workers in the same cluster may run different runtimes. Kubernetes therefore needs to know what workloads are valid for each node type and runtime.

Secondly, it contains a Toleration. What this says is that for nodes that match the Taint specified in this Toleration, any workload that has this RuntimeClass attached is able to run on them. If neither this RuntimeClass nor an equivalent Toleration is attached to the workload, then the Kubernetes control plane knows to schedule it on other workers.

The below shows the RuntimeClass we use in this cluster: -

apiVersion: node.k8s.io/v1beta1

kind: RuntimeClass

metadata:

name: windows-2019

handler: 'docker'

scheduling:

nodeSelector:

kubernetes.io/os: 'windows'

kubernetes.io/arch: 'amd64'

node.kubernetes.io/windows-build: '10.0.17763'

tolerations:

- effect: NoSchedule

key: OS

operator: Equal

value: "Windows"

This class specifies that: -

- The nodes in question must be running Windows, on the AMD64 (i.e. 64-bit x86) architecture

- They must have the Windows build 10.0.17763 (the version number for Windows 2019 Server)

- A toleration that allows it to run on “tainted” workers
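Before relying on this selector, it is worth confirming that the Windows workers actually carry these labels. The sketch below wraps a kubectl query in a small helper; the function name is my own, and it assumes kubectl is already pointed at the cluster: -

```shell
# Show each node with the three labels the RuntimeClass nodeSelector matches on.
# show_node_os_labels is a hypothetical helper name, not part of kubectl.
show_node_os_labels() {
  kubectl get nodes \
    -L kubernetes.io/os,kubernetes.io/arch,node.kubernetes.io/windows-build
}

# Usage:
#   show_node_os_labels
```

If the windows-build column is empty or shows a different build for a Windows worker, the nodeSelector above will not match it and the value in the RuntimeClass needs adjusting.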

In addition, we must also add a taint to the Windows workers themselves. A taint is a way of saying that workloads shouldn’t run on a certain node (or nodes). A toleration can then be used to say “This workload can run on nodes with these taints”. The taint that this RuntimeClass would match is applied like the below: -

$ kubectl taint nodes k8s-winworker-0 OS=Windows:NoSchedule

node/k8s-winworker-0 tainted

This worker is now “tainted”. Only workloads that tolerate this taint will be allowed to run on it (i.e. those that have our RuntimeClass attached). For any Windows-based workload, we just need to attach the RuntimeClass to ensure it runs on the correct nodes.
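With more than one Windows worker, tainting each node by name gets tedious. A possible shortcut, sketched below with helper names of my own, is to select nodes by the kubernetes.io/os label and then list the taints to confirm the result. Taint keys and values are compared exactly, so the casing used here has to match the toleration in the RuntimeClass: -

```shell
# Taint every node that reports Windows as its operating system, rather than
# naming each worker. --overwrite lets the command be re-run on nodes that
# already carry the taint. taint_windows_nodes is a hypothetical helper name.
taint_windows_nodes() {
  kubectl taint nodes -l kubernetes.io/os=windows OS=Windows:NoSchedule --overwrite
}

# List each node with its OS and any taint keys, to confirm the result.
# show_node_taints is a hypothetical helper name.
show_node_taints() {
  kubectl get nodes \
    -o custom-columns='NAME:.metadata.name,OS:.status.nodeInfo.operatingSystem,TAINTS:.spec.taints[*].key'
}
```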

To attach the RuntimeClass to the Deployment, you can use something like the following: -

apiVersion: apps/v1

kind: Deployment

metadata:

name: iis-2019

labels:

app: iis-2019

spec:

replicas: 1

template:

metadata:

name: iis-2019

labels:

app: iis-2019

spec:

runtimeClassName: windows-2019

This Deployment can now “tolerate” the previously-specified taint, allowing it to deploy on the Windows workers. It also uses the nodeSelector for scheduling, looking for a 64-bit Windows 2019 Server based worker.

Apply the RuntimeClass

To apply the RuntimeClass, create a file that contains the RuntimeClass as specified above, and then apply with kubectl apply -f $NAME-OF-FILE.yaml: -

$ kubectl apply -f win2k19Class.yml

runtimeclass.node.k8s.io/windows-2019 created

You can check the RuntimeClass with the below: -

$ kubectl get runtimeclasses

NAME HANDLER AGE

windows-2019 docker 7s

$ kubectl describe runtimeclasses windows-2019

Name: windows-2019

Namespace:

Labels: <none>

Annotations: <none>

API Version: node.k8s.io/v1beta1

Handler: docker

Kind: RuntimeClass

Metadata:

Creation Timestamp: 2021-01-03T21:16:35Z

Managed Fields:

API Version: node.k8s.io/v1beta1

Fields Type: FieldsV1

fieldsV1:

f:handler:

f:metadata:

f:annotations:

.:

f:kubectl.kubernetes.io/last-applied-configuration:

f:scheduling:

.:

f:nodeSelector:

.:

f:kubernetes.io/arch:

f:kubernetes.io/os:

f:node.kubernetes.io/windows-build:

f:tolerations:

Manager: kubectl-client-side-apply

Operation: Update

Time: 2021-01-03T21:16:35Z

Resource Version: 26030

Self Link: /apis/node.k8s.io/v1beta1/runtimeclasses/windows-2019

UID: 0f70a952-3381-40bb-887e-27db0864c86f

Scheduling:

Node Selector:

kubernetes.io/arch: amd64

kubernetes.io/os: windows

node.kubernetes.io/windows-build: 10.0.17763

Tolerations:

Effect: NoSchedule

Key: os

Operator: Equal

Value: windows

Events: <none>

With this applied, Kubernetes will only attempt to schedule Pods on Windows nodes if they have the RuntimeClass. This does mean that if we applied the RuntimeClass to a Linux workload, Kubernetes would try to schedule it on the Windows nodes.

However this would mean someone (or some process) has applied this intentionally (or at least made an error in configuration), rather than the Kubernetes scheduler being unaware of where to run the workload.
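To spot a misapplied RuntimeClass, it helps to see which Pods carry one and where they ended up. The helper below is a sketch (the function name is my own) that prints both columns side by side: -

```shell
# List Pods with their RuntimeClass (if any) and the node they were scheduled on.
# show_pod_placement is a hypothetical helper name.
show_pod_placement() {
  kubectl get pods --all-namespaces \
    -o custom-columns='NAMESPACE:.metadata.namespace,NAME:.metadata.name,RUNTIMECLASS:.spec.runtimeClassName,NODE:.spec.nodeName'
}

# Usage:
#   show_pod_placement
```

A Linux workload listed with the windows-2019 RuntimeClass would stand out immediately in this output.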

Ingress

Now that we have ensured that workloads will run on the correct operating system, we can deploy an Ingress Controller. The purpose of this is to allow external access to workloads within the cluster.

A number of Ingress Controllers exist for Kubernetes, covering everything from Azure to F5 BIG-IP loadbalancers. For this cluster we are using the NGINX Ingress Controller, as it is widely used and supported, with excellent documentation. It also has no reliance on an external cloud provider or a commercial loadbalancer/application gateway.

Apply the Ingress Controller

The process to apply the Ingress Controller is a single command: -

$ kubectl apply -f https://raw.githubusercontent.com/kubernetes/ingress-nginx/master/deploy/static/provider/baremetal/deploy.yaml

namespace/ingress-nginx created

serviceaccount/ingress-nginx created

configmap/ingress-nginx-controller created

clusterrole.rbac.authorization.k8s.io/ingress-nginx created

clusterrolebinding.rbac.authorization.k8s.io/ingress-nginx created

role.rbac.authorization.k8s.io/ingress-nginx created

rolebinding.rbac.authorization.k8s.io/ingress-nginx created

service/ingress-nginx-controller-admission created

service/ingress-nginx-controller created

deployment.apps/ingress-nginx-controller created

validatingwebhookconfiguration.admissionregistration.k8s.io/ingress-nginx-admission created

serviceaccount/ingress-nginx-admission created

clusterrole.rbac.authorization.k8s.io/ingress-nginx-admission created

clusterrolebinding.rbac.authorization.k8s.io/ingress-nginx-admission created

role.rbac.authorization.k8s.io/ingress-nginx-admission created

rolebinding.rbac.authorization.k8s.io/ingress-nginx-admission created

job.batch/ingress-nginx-admission-create created

job.batch/ingress-nginx-admission-patch created

This creates all the necessary resources to run the Ingress Controller. We can verify all of the objects using: -

$ kubectl get all -n ingress-nginx

NAME READY STATUS RESTARTS AGE

pod/ingress-nginx-admission-create-kmrv2 0/1 Completed 0 7m52s

pod/ingress-nginx-admission-patch-s4jwq 0/1 Completed 0 7m52s

pod/ingress-nginx-controller-96fb64b49-6x6jt 1/1 Running 4 7m52s

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/ingress-nginx-controller LoadBalancer 10.110.160.21 <pending> 80:31293/TCP,443:31561/TCP 7m52s

service/ingress-nginx-controller-admission ClusterIP 10.103.99.180 <none> 443/TCP 7m52s

NAME READY UP-TO-DATE AVAILABLE AGE

deployment.apps/ingress-nginx-controller 1/1 1 1 7m52s

NAME DESIRED CURRENT READY AGE

replicaset.apps/ingress-nginx-controller-96fb64b49 1 1 1 7m52s

NAME COMPLETIONS DURATION AGE

job.batch/ingress-nginx-admission-create 1/1 6s 7m52s

job.batch/ingress-nginx-admission-patch 1/1 7s 7m52s

At this stage, there is nothing else we need to do. Without a Load Balancer mechanism in place, we are not yet able to expose applications outside of the cluster. This is evident in that the service/ingress-nginx-controller Service is stuck in Pending, waiting for an external IP.

Load balancing

For traffic to be able to reach the cluster from outside, we can use a combination of Ingress and load balancing. Ingress is used to provide path-based routing (i.e. /app1 goes to the app1 Kubernetes service, /app2 goes to the app2 Kubernetes service). The load balancing mechanism is then used to provide the routing from the external network into the cluster.

A point to note here is that I am using external network to mean anything outside of the cluster, not necessarily exposing this to the public internet. This is still required even if you are only running in a lab scenario.

The reason for using load balancing is that the application could be running on any worker in the cluster. As of v1.20, Kubernetes supports clusters of up to 5000 nodes, so having to remember the IP address of the node and the port the application uses could be very cumbersome, to say the least.

Most cloud providers provide load balancing functionality, meaning that so long as the cluster knows how to interact with the cloud provider, it can provision load balancers dynamically. As we are building this cluster on KVM, we do not have this luxury. Enter MetalLB.

MetalLB

MetalLB aims to make non-Cloud provisioned Kubernetes clusters first class citizens when it comes to bringing user traffic into the cluster. MetalLB can function in two ways: -

- Layer 2 Mode - Assign a pool of IP addresses (from a subnet the Kubernetes worker nodes are attached to) and MetalLB will announce itself as “owning” that IP (using ARP for IPv4 or NDP for IPv6).

- BGP Mode - Peer with a BGP speaking router and announce addresses to bring traffic into the cluster

Up until very recently, I spent nearly a decade in Network Engineering and Architecture, so BGP is something I am very familiar with. However, I do also appreciate that not everyone has this background so diving deep into running routing protocols is going to be beyond the scope of this post.

Because of this, I have chosen to use Layer 2 mode for this post for simplicity. I will probably cover using the BGP mode in a future post though!

Deploying MetalLB

To deploy MetalLB, we can follow the steps from the MetalLB documentation: -

$ kubectl apply -f https://raw.githubusercontent.com/metallb/metallb/v0.9.5/manifests/namespace.yaml

namespace/metallb-system created

$ kubectl apply -f https://raw.githubusercontent.com/metallb/metallb/v0.9.5/manifests/metallb.yaml

podsecuritypolicy.policy/controller created

podsecuritypolicy.policy/speaker created

serviceaccount/controller created

serviceaccount/speaker created

clusterrole.rbac.authorization.k8s.io/metallb-system:controller created

clusterrole.rbac.authorization.k8s.io/metallb-system:speaker created

role.rbac.authorization.k8s.io/config-watcher created

role.rbac.authorization.k8s.io/pod-lister created

clusterrolebinding.rbac.authorization.k8s.io/metallb-system:controller created

clusterrolebinding.rbac.authorization.k8s.io/metallb-system:speaker created

rolebinding.rbac.authorization.k8s.io/config-watcher created

rolebinding.rbac.authorization.k8s.io/pod-lister created

daemonset.apps/speaker created

deployment.apps/controller created

$ kubectl create secret generic -n metallb-system memberlist --from-literal=secretkey="$(openssl rand -base64 128)"

secret/memberlist created

$ kubectl get all -n metallb-system

NAME READY STATUS RESTARTS AGE

pod/controller-65db86ddc6-r9bhj 1/1 Running 0 7m58s

pod/speaker-7tqvf 1/1 Running 0 7m58s

pod/speaker-85jnx 1/1 Running 0 7m58s

pod/speaker-b8sbt 1/1 Running 0 7m58s

pod/speaker-fvzlm 1/1 Running 0 7m58s

pod/speaker-qx8l4 1/1 Running 0 7m58s

pod/speaker-ws4jn 1/1 Running 0 7m58s

NAME DESIRED CURRENT READY UP-TO-DATE AVAILABLE NODE SELECTOR AGE

daemonset.apps/speaker 6 6 6 6 6 kubernetes.io/os=linux 7m58s

NAME READY UP-TO-DATE AVAILABLE AGE

deployment.apps/controller 1/1 1 1 7m58s

NAME DESIRED CURRENT READY AGE

replicaset.apps/controller-65db86ddc6 1 1 1 7m58s

This deploys all the Pods, service accounts, roles, namespaces and more. MetalLB will stay idle for now, until it receives its configuration (which we will cover in the next step): -

$ kubectl logs -n metallb-system controller-65db86ddc6-r9bhj | grep -i config | jq

[...]

{

"caller": "main.go:62",

"event": "noConfig",

"msg": "not processing, still waiting for config",

"service": "ingress-nginx/ingress-nginx-controller",

"ts": "2021-01-08T20:12:39.495793513Z"

}

[...]

Configuring MetalLB

To configure MetalLB, we use steps similar to the MetalLB Configuration Documentation. This will define the mode (Layer 2 or BGP), and also the IP range we will use to serve traffic on.

I am using the 10.15.32.0/24 subnet for my KVM lab, and have chosen a subset of this range for MetalLB to use for load balanced services: -

apiVersion: v1

kind: ConfigMap

metadata:

namespace: metallb-system

name: config

data:

config: |

address-pools:

- name: default

protocol: layer2

addresses:

- 10.15.32.200-10.15.32.210

The protocol defines the mode (in this case, layer2).

Now, whenever we use the type: LoadBalancer within a Kubernetes Service, MetalLB will use one of the IPs from 10.15.32.200 to 10.15.32.210 and will respond to Address Resolution Protocol (ARP) requests for the IP. As long as this range is reachable on your network, Kubernetes Services will now be available.
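It is worth knowing how many Services of type LoadBalancer a pool can serve, as each one consumes an address. The sketch below counts the addresses in a first-last range like the one above; the function names are my own and it only handles IPv4 ranges written in that form: -

```shell
# Convert a dotted-quad IPv4 address to an integer.
ip_to_int() {
  local IFS=.
  # Split the address on dots into $1..$4.
  set -- $1
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Count the addresses in a MetalLB-style "first-last" range (inclusive).
pool_size() {
  local first="${1%-*}" last="${1#*-}"
  echo $(( $(ip_to_int "$last") - $(ip_to_int "$first") + 1 ))
}

pool_size 10.15.32.200-10.15.32.210   # prints 11
```

With the NGINX Ingress Controller taking one address, that leaves ten for other Services in this lab.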

To deploy the ConfigMap, add something like the above to a file (changing to suit your network/subnetting structure), and then deploy like so: -

$ kubectl apply -f configmap.yaml

configmap/config created

We should now see some activity in the logs: -

$ kubectl logs -n metallb-system controller-65db86ddc6-r9bhj | grep -i config | jq

[...]

{

"caller": "main.go:108",

"configmap": "metallb-system/config",

"event": "startUpdate",

"msg": "start of config update",

"ts": "2021-01-08T20:23:17.644609367Z"

}

{

"caller": "main.go:121",

"configmap": "metallb-system/config",

"event": "endUpdate",

"msg": "end of config update",

"ts": "2021-01-08T20:23:17.644842185Z"

}

{

"caller": "k8s.go:402",

"configmap": "metallb-system/config",

"event": "configLoaded",

"msg": "config (re)loaded",

"ts": "2021-01-08T20:23:17.644924109Z"

}

{

"caller": "main.go:49",

"event": "startUpdate",

"msg": "start of service update",

"service": "ingress-nginx/ingress-nginx-controller",

"ts": "2021-01-08T20:23:17.650177469Z"

}

{

"caller": "service.go:114",

"event": "ipAllocated",

"ip": "10.15.32.200",

"msg": "IP address assigned by controller",

"service": "ingress-nginx/ingress-nginx-controller",

"ts": "2021-01-08T20:23:17.650293618Z"

}

{

"caller": "main.go:96",

"event": "serviceUpdated",

"msg": "updated service object",

"service": "ingress-nginx/ingress-nginx-controller",

"ts": "2021-01-08T20:23:17.674400824Z"

}

[...]

As you can see, one of the services has already had an IP address allocated. This is the NGINX Ingress Controller, which is now exposing itself on the IP 10.15.32.200: -

$ kubectl get service -n ingress-nginx

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

ingress-nginx-controller LoadBalancer 10.110.160.21 10.15.32.200 80:31293/TCP,443:31561/TCP 4d22h

ingress-nginx-controller-admission ClusterIP 10.103.99.180 <none> 443/TCP 4d22h

$ curl 10.15.32.200

<html>

<head><title>404 Not Found</title></head>

<body>

<center><h1>404 Not Found</h1></center>

<hr><center>nginx</center>

</body>

</html>

While the page returned is a 404 Not Found error page, this is being presented by the NGINX Ingress Controller. This means that Services are now available outside of the cluster. Now we can start running some applications!

Running applications

Linux

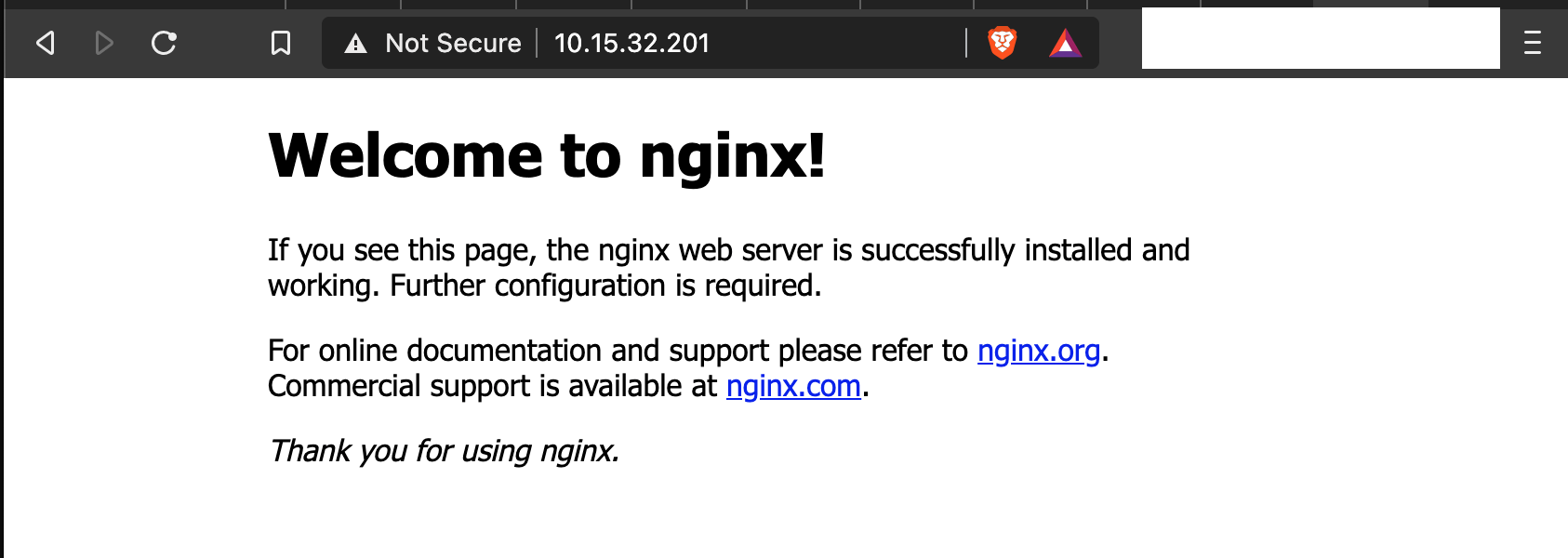

To test running a Linux workload, we will use a generic NGINX deployment, with a Service of type: LoadBalancer, meaning that traffic will be forwarded directly from the LoadBalancer to the application (no ingress involved).

The Deployment and Service are defined like so: -

apiVersion: apps/v1

kind: Deployment

metadata:

name: nginx-deployment

labels:

app: nginx

spec:

replicas: 3

selector:

matchLabels:

app: nginx

template:

metadata:

labels:

app: nginx

spec:

containers:

- name: nginx

image: nginx:1.14.2

ports:

- containerPort: 80

---

apiVersion: v1

kind: Service

metadata:

name: nginx

spec:

type: LoadBalancer

ports:

- protocol: TCP

port: 80

selector:

app: nginx

The Deployment will run three NGINX containers, and the containers will listen on TCP port 80. The Service matches the NGINX application (using the selector), and exposes it on a new IP from the range we assigned to MetalLB: -

$ kubectl get pods

NAME READY STATUS RESTARTS AGE

nginx-deployment-66b6c48dd5-7qp64 1/1 Running 0 13m

nginx-deployment-66b6c48dd5-cbtwt 1/1 Running 0 13m

nginx-deployment-66b6c48dd5-qfd52 1/1 Running 0 13m

$ kubectl get service

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 13d

nginx LoadBalancer 10.102.182.166 10.15.32.201 80:30041/TCP 14m

If we then browse to this IP address, we should get the default NGINX landing page: -

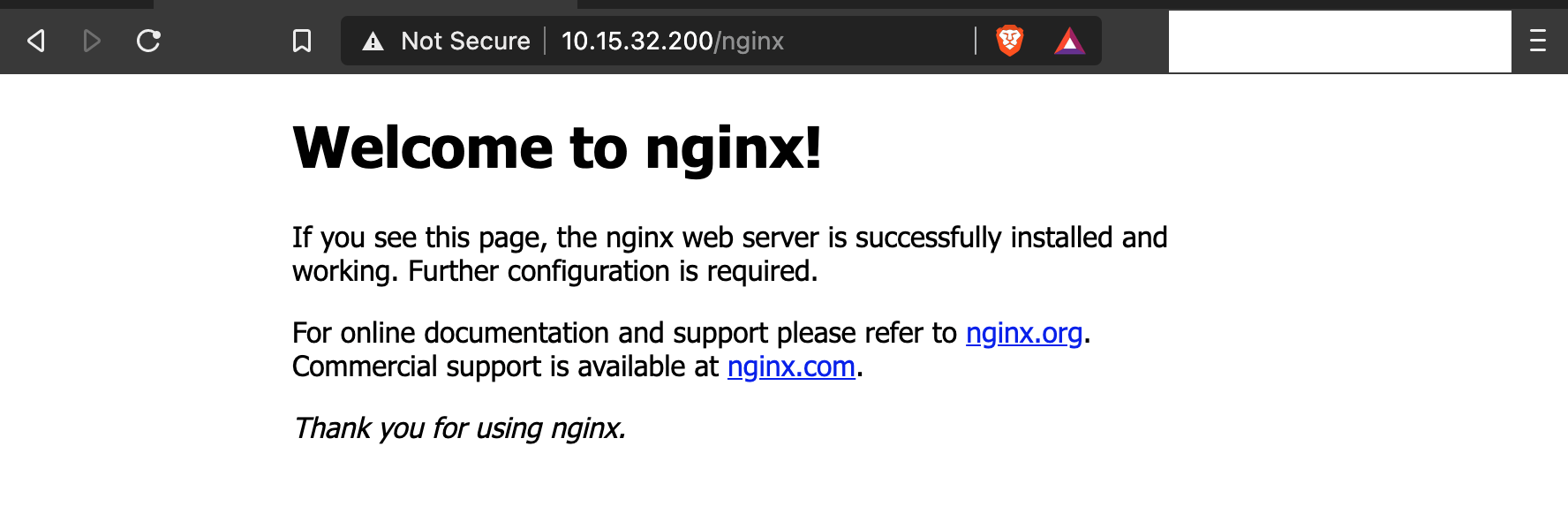
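The same check can be scripted. The helper below is a sketch (the function name is my own) that asks Kubernetes for the address MetalLB assigned to a Service, which can then be passed to curl: -

```shell
# Print the external IP that the load balancer assigned to a Service.
# service_external_ip is a hypothetical helper name.
service_external_ip() {
  local name="$1" namespace="${2:-default}"
  kubectl get service "$name" -n "$namespace" \
    -o jsonpath='{.status.loadBalancer.ingress[0].ip}'
}

# Usage, assuming the nginx Service defined above:
#   curl -s -o /dev/null -w '%{http_code}\n' "http://$(service_external_ip nginx)/"
```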

Linux using Ingress

While the above method works well, we will run out of available IP addresses very quickly if every service gets its own IP address. This is where we can use the Ingress method, which allows us to do path-based routing for traffic: -

apiVersion: apps/v1

kind: Deployment

metadata:

name: nginx-deployment

labels:

app: nginx

spec:

replicas: 3

selector:

matchLabels:

app: nginx

template:

metadata:

labels:

app: nginx

spec:

containers:

- name: nginx

image: nginx:1.14.2

ports:

- containerPort: 80

---

apiVersion: v1

kind: Service

metadata:

name: nginx

spec:

type: NodePort

ports:

- protocol: TCP

port: 80

selector:

app: nginx

---

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

name : nginx

annotations:

nginx.ingress.kubernetes.io/rewrite-target: /

spec:

rules:

- http:

paths:

- path: /nginx

pathType: Prefix

backend:

service:

name: nginx

port:

number: 80

To explain the above configuration, we expose the Service using type: NodePort. Rather than exposing via MetalLB directly, we add an Ingress object which matches the service. This object says that if we target the IP of the NGINX Ingress Controller (10.15.32.200) and use the path /nginx, traffic will be forwarded to the NGINX service we have created. We can check the status of the Ingress object with: -

$ kubectl get ingresses

NAME CLASS HOSTS ADDRESS PORTS AGE

nginx <none> * 10.15.32.20 80 9m19s

$ kubectl describe ingresses nginx

Name: nginx

Namespace: default

Address: 10.15.32.20

Default backend: default-http-backend:80 (<error: endpoints "default-http-backend" not found>)

Rules:

Host Path Backends

---- ---- --------

*

/nginx nginx:80 (10.244.2.9:80,10.244.3.12:80,10.244.4.14:80)

Annotations: nginx.ingress.kubernetes.io/rewrite-target: /

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal CREATE 9m23s nginx-ingress-controller Ingress default/nginx

Normal UPDATE 9m19s nginx-ingress-controller Ingress default/nginx

As we can see, we have our Ingress object, as well as the paths for that object too.

We can now verify that path-based routing works with the NGINX Ingress Controller: -
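One way to run this check from the command line is to compare the HTTP status codes for a routed path and an unrouted one. The helper below is a sketch; the function name is my own and the IP is the Ingress Controller address from earlier in this post: -

```shell
# Print the HTTP status code returned for a path behind the Ingress Controller.
# ingress_status is a hypothetical helper name.
ingress_status() {
  local ip="$1" path="$2"
  curl -s -o /dev/null -w '%{http_code}\n' "http://${ip}${path}"
}

# Usage with the addresses from this post:
#   ingress_status 10.15.32.200 /nginx   # the path routed to the nginx Service
#   ingress_status 10.15.32.200 /        # no rule for this path, so the controller should answer with its 404
```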

It works. Now to deploy a Windows application!

Windows

In this section, we will deploy an IIS container on Windows so that it exposes a basic web server (as we did with NGINX on the Linux workers).

One point to note is that I have yet to be able to make MetalLB work directly with Windows nodes. In principle, MetalLB should attract the traffic via the Linux MetalLB pods, and then forward it to the Windows workers via the Flannel networking we configured in the previous parts of this series. This does not seem to work in my lab, nor have I seen any other articles or documentation that demonstrate this working correctly.

Instead we shall make use of the NGINX Ingress Controller, and forward to the IIS container using path-based routing. We define a Deployment, Service and Ingress object like in the previous section: -

apiVersion: apps/v1

kind: Deployment

metadata:

name: iis-2019

labels:

app: iis-2019

spec:

replicas: 1

template:

metadata:

name: iis-2019

labels:

app: iis-2019

spec:

runtimeClassName: windows-2019

containers:

- name: iis

image: mcr.microsoft.com/windows/servercore/iis:windowsservercore-ltsc2019

ports:

- containerPort: 80

resources:

limits:

cpu: 1

memory: 800Mi

requests:

cpu: .1

memory: 300Mi

selector:

matchLabels:

app: iis-2019

---

apiVersion: v1

kind: Service

metadata:

name: iis

spec:

type: NodePort

ports:

- protocol: TCP

port: 80

selector:

app: iis-2019

---

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

name : iis

annotations:

nginx.ingress.kubernetes.io/rewrite-target: /

spec:

rules:

- http:

paths:

- path: /iis

pathType: Prefix

backend:

service:

name: iis

port:

number: 80

This is very similar to the previous section. The only major difference (other than specifying the IIS image and names rather than NGINX) is the runtimeClassName. If you recall from earlier in this post, this is required to be able to tolerate the taint on the Windows nodes that stops Linux workloads from attempting to schedule on Windows. Without this, the container is unable to schedule on the Windows worker.

After applying this, we should see the following: -

$ kubectl get pod

NAME READY STATUS RESTARTS AGE

iis-2019-c7b8f4cdb-q6cmf 1/1 Running 0 15m

nginx-deployment-66b6c48dd5-5xpr6 1/1 Running 0 38m

nginx-deployment-66b6c48dd5-dx4nv 1/1 Running 0 38m

nginx-deployment-66b6c48dd5-rfxqj 1/1 Running 0 38m

$ kubectl get service iis

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

iis NodePort 10.100.120.68 <none> 80:30208/TCP 16m

$ kubectl get ingress

NAME CLASS HOSTS ADDRESS PORTS AGE

iis <none> * 10.15.32.20 80 14m

nginx <none> * 10.15.32.20 80 37m

$ kubectl describe ingresses iis

Warning: extensions/v1beta1 Ingress is deprecated in v1.14+, unavailable in v1.22+; use networking.k8s.io/v1 Ingress

Name: iis

Namespace: default

Address: 10.15.32.20

Default backend: default-http-backend:80 (<error: endpoints "default-http-backend" not found>)

Rules:

Host Path Backends

---- ---- --------

*

/iis iis:80 (10.244.1.14:80)

Annotations: nginx.ingress.kubernetes.io/rewrite-target: /

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal CREATE 14m nginx-ingress-controller Ingress default/iis

Normal UPDATE 13m nginx-ingress-controller Ingress default/iis

Now if we navigate to the 10.15.32.200 IP using the path /iis, we should see the IIS landing page: -

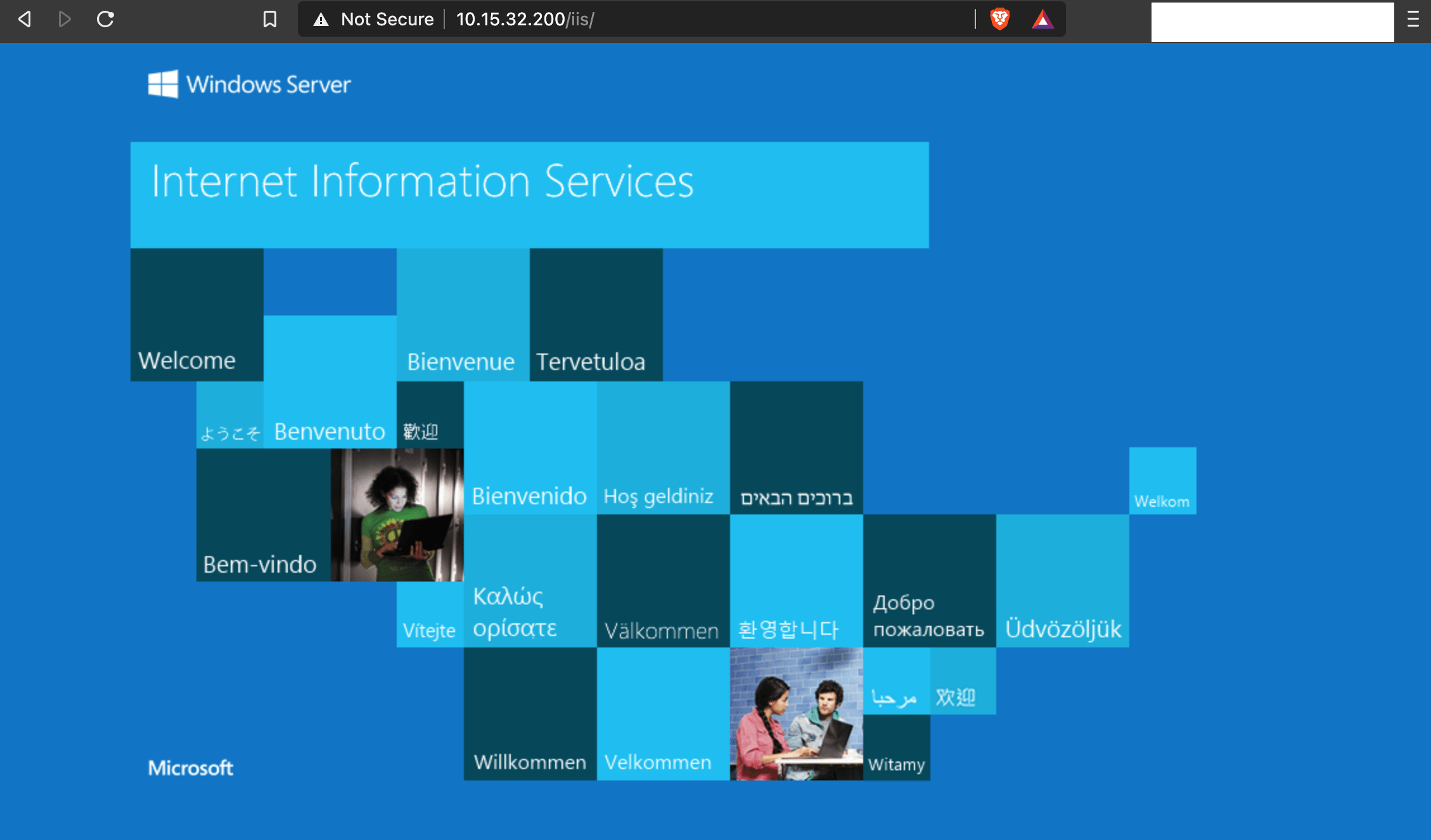

It loads, although the main image doesn’t. This appears to be because the Ingress controller is exposing the path directly (i.e. passing /iis to the IIS backend) for the image. To work around this, we can update the Ingress object to the below: -

---

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

name : iis

annotations:

nginx.ingress.kubernetes.io/rewrite-target: /$2

spec:

rules:

- http:

paths:

- path: /iis(/|$)(.*)

pathType: Prefix

backend:

service:

name: iis

port:

number: 80

This uses a capture group, saying that anything that comes after the IIS path must also be passed to the Ingress backend as is. We can see the results of this below: -

Notice the trailing / after the /iis path in the URL. Without this, the image is still not visible.
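To see what the capture group does to a request path, the rule can be approximated locally with sed. This is only a model: NGINX uses its own regular expression engine, sed writes the second group as \2 where the annotation uses $2, the function name is my own, and iisstart.png is just an example file name: -

```shell
# Model of the Ingress rule: path "/iis(/|$)(.*)" with rewrite-target "/$2".
# rewrite_iis_path is a hypothetical helper name for this sketch.
rewrite_iis_path() {
  printf '%s\n' "$1" | sed -E 's#^/iis(/|$)(.*)#/\2#'
}

rewrite_iis_path /iis                # prints /
rewrite_iis_path /iis/               # prints /
rewrite_iis_path /iis/iisstart.png   # prints /iisstart.png
```

This also suggests a likely reason for the trailing slash, assuming the landing page references its image with a relative URL. From /iis a browser would request /iisstart.png, which does not match the /iis rule at all, whereas from /iis/ it would request /iis/iisstart.png, which the rule rewrites to /iisstart.png on the backend.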

With the above, we now have both Linux and Windows applications running on the same cluster!

Summary

Kubernetes is fast becoming the de facto way of deploying container-based applications. While Linux is still the easiest and preferred platform for deploying applications on Kubernetes, this series has shown that it is perfectly possible to run container-based workloads on Windows. It has also shown that building and running a Windows worker in a Kubernetes cluster is not too dissimilar from running Linux workers.

We created our machine images (the basis for our control plane nodes and workers) using the same tools (Packer and Ansible). We created the cluster using Terraform and Cloud-Init, with a very similar approach to configuring and initializing the workers (both Windows and Linux). Finally, we deployed applications to both Linux and Windows, using the same tools (the Kubernetes command line) and resource definitions (Deployments, Services, Ingress).

All of this has enabled us to treat Linux and Windows almost equally. By doing this, the choice of how to create applications to run on Kubernetes is down to preference and language choice. Developers who are more comfortable with creating Windows-native applications no longer need to start from the beginning and rewrite/refactor their application to run inside a Linux container.

Hopefully this series has provided some benefit to those who want to use Windows in a Kubernetes environment, as well as those just curious to see how well they work together.

2021-01-09 19:25 +0000