
Configuring BGP Anycast on Equinix Metal using Pulumi and Saltstack

If you have been following my previous posts for a while, you are likely to have seen posts on Saltstack, and more recently Pulumi. You may also be aware that I come from a networking background, meaning I’ll often deep dive into topics like BGP, anycast and routing protocols.

Around 18 months ago, I put together a post on providing anycast services with BGP. It covered what anycast is, how to provide anycast-based services with BGP, and also how to conditionally advertise your anycast IPs using ExaBGP.

This post will take it a step further, using Pulumi to provision servers and BGP sessions on Equinix Metal, and Saltstack to configure the servers to advertise routes to Equinix via BGP.

Equinix Metal

As mentioned in my previous post using Pulumi, Equinix Metal are a provider of bare metal servers and infrastructure. For those who need dedicated machines with no contention over CPU/memory/storage on the server, they are able to provision them with the same tooling that others would use to create a VPS/instance on other cloud providers (e.g. AWS, Digital Ocean, Linode).

I recently guested on a stream with David McKay/Rawkode, a developer advocate at Equinix Metal, going through some of the networking options available when using their infrastructure. While we didn’t get into configuring BGP, it should at least give you an idea of what Equinix Metal is like as a cloud/infrastructure provider.

A big point to take away from this is that we are able to configure BGP within Equinix Metal, and advertise routes from the servers we create. This option isn’t always available with other cloud/infrastructure providers, or at a minimum requires your own IP space.

Anycast with ExaBGP

As mentioned in the introduction, I have covered using ExaBGP for Anycast before. The aim of this post is very similar to the previous Anycast post, except that there will be no user interaction involved (other than typing pulumi up).

We won’t go into great depth about how ExaBGP works, as this is already covered here.

Saltstack

In this post we will cover the Saltstack states and pillars to configure our servers, and the use of grains to retrieve information from the servers that we provision.

For the impatient among you (I’m one of them!), you can view all of these in this repository.

As a brief refresher, or for those who are used to other configuration management systems, the following terms are used in Saltstack: -

- states - They contain the tasks that need to run. Similar to Ansible Playbooks, Puppet Manifests or Chef Cookbooks

- pillars - These are variables which can be used in our states and templates. These are similar to Ansible’s host variables

- grains - These are per-node facts, that are derived from the hosts themselves (e.g. host operating system, IP addresses etc)

  - You can also set custom grains, which can then be referenced in your states or pillars

- minions - Hosts running the Salt Minion agent, that register with the master

States

In our states directory, we have the following top file (i.e. the file which determines what states apply to what nodes): -

base:
  'G@os:Ubuntu or G@os:Debian':
    - match: compound
    - base
  'bgp-*':
    - pip
    - network
    - exabgp
    - nginx

The first section installs some base packages on any minion running Ubuntu or Debian. None of the packages are relevant to the anycast deployment (other than tcpdump, if you want to run packet captures to prove where traffic is being forwarded).

The second section matches minions whose minion ID (often the same as the hostname, but not a requirement) starts with bgp-. If a node is called bgp-paris or bgp-01 or bgp-meshuggah, they would all be matched by this. However, a node called more-bgp-01 wouldn’t match.
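As a rough illustration of how the 'bgp-*' target behaves, the Go sketch below uses the standard library's path.Match as a stand-in for Salt's glob matcher. This is only an approximation, because Salt does not implement its matcher this way. The matchesTarget helper is a hypothetical name.

```go
package main

import (
	"fmt"
	"path"
)

// matchesTarget approximates Salt's default glob targeting with path.Match,
// which understands *, ? and [...] character classes. Unlike Salt, * here
// does not match a '/' character.
func matchesTarget(pattern, minionID string) bool {
	ok, err := path.Match(pattern, minionID)
	return err == nil && ok
}

func main() {
	for _, id := range []string{"bgp-paris", "bgp-01", "bgp-meshuggah", "more-bgp-01"} {
		fmt.Printf("%s matches 'bgp-*': %v\n", id, matchesTarget("bgp-*", id))
	}
}
```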

The pip state

python3-pip:
  pkg.installed

As you can see, this just installs the python3-pip package. Without this, we cannot install ExaBGP (as it is packaged as a Python PIP module).

The network state

{% for interface in pillar['network']['interfaces'] %}
{{ interface['name'] }}:
  network.managed:
    - enabled: True
    {%- if interface['type'] is defined %}
    - type: {{ interface['type'] }}
    {%- endif %}
    {%- if interface['proto'] is defined %}
    - proto: {{ interface['proto'] }}
    {%- endif %}
    - ipaddr: {{ interface['ipv4']['address'] }}
    - netmask: {{ interface['ipv4']['mask'] }}
    {%- if interface['ipv4']['gateway'] is defined %}
    - gateway: {{ interface['ipv4']['gateway'] }}
    {%- endif %}
    {%- if interface['ipv4']['nameservers'] is defined %}
    - dns:
      {% for ns in interface['ipv4']['nameservers'] %}
      - {{ ns }}
      {% endfor %}
    {%- endif %}
{%- if interface['routes'] is defined %}
routes_{{ interface['name'] }}:
  network.routes:
    - name: {{ interface['name'] }}
    - routes:
    {%- for route in interface['routes'] %}
      - name: {{ route['name'] }}
        ipaddr: {{ route['prefix'] }}
        netmask: {{ route['mask'] }}
        gateway: {{ route['gateway'] }}
    {%- endfor %}
{%- endif %}
{% endfor %}

While this initially looks quite complex, it is mostly if statements deciding whether to supply a value for each field of the Saltstack network.managed module, which creates network interfaces. Not every field needs to be defined, and some shouldn’t be (e.g. a loopback interface doesn’t need a default gateway or a nameserver).

We can add static routes as well, if we want traffic to take a different path than the default gateway.

The exabgp state

exabgp:
  pip.installed

/etc/exabgp:
  file.directory:
    - user: root
    - group: root
    - mode: 755
    - makedirs: True

/etc/exabgp/scripts:
  file.directory:
    - user: root
    - group: root
    - mode: 755
    - makedirs: True

{% if not salt['file.is_fifo']('/var/run/exabgp.in') %}
exabgp_in_fifo:
  cmd.run:
    - name: mkfifo /var/run/exabgp.in
{% endif %}

{% if not salt['file.is_fifo']('/var/run/exabgp.out') %}
exabgp_out_fifo:
  cmd.run:
    - name: mkfifo /var/run/exabgp.out
{% endif %}

/etc/systemd/system/exabgp.service:
  file.managed:
    - source: salt://exabgp/files/exabgp.service.j2
    - user: root
    - group: root
    - mode: 0644
    - template: jinja

/etc/exabgp/exabgp.conf:
  file.managed:
    - source: salt://exabgp/files/exabgp.conf.j2
    - user: root
    - group: root
    - mode: 0644
    - template: jinja

exabgp_scripts:
  file.recurse:
    - name: /etc/exabgp/scripts
    - source: salt://exabgp/scripts
    - user: root
    - group: root
    - file_mode: 0755
    - template: jinja

exabgp_systemd_reload:
  cmd.run:
    - name: systemctl daemon-reload
    - onchanges:
      - file: /etc/systemd/system/exabgp.service

exabgp.service:
  service.running:
    - enable: True
    - reload: True
    - restart: True
    - watch:
      - file: /etc/exabgp/exabgp.conf
      - file: /etc/systemd/system/exabgp.service
      - file: exabgp_scripts

There are a few parts to this: -

- We install ExaBGP using Python’s PIP

- We create the /etc/exabgp and /etc/exabgp/scripts directories

  - The base directory contains global ExaBGP configuration

  - The scripts directory contains check scripts that test if applications are functioning correctly

- We create named pipes using mkfifo that will be used by ExaBGP itself for communication

- We create a SystemD unit file to manage the startup/shutdown of ExaBGP

- We create the exabgp.conf file with all of the necessary ExaBGP configuration (including BGP peers)

- We make sure to run a systemctl daemon-reload if the ExaBGP service file changes (ensuring SystemD uses the most recent version of the unit file)

- Finally, we make sure the service is enabled, reloaded and restarted if any of the ExaBGP files change (i.e. service, configuration or scripts)

Unfortunately we must restart ExaBGP if the scripts or configuration change, as it doesn’t pick up the changes automatically.

The SystemD unit file is found in files/exabgp.service.j2, and looks like the below: -

[Unit]
Description=ExaBGP
After=network.target
ConditionPathExists=/etc/exabgp/exabgp.conf

[Service]
Environment=exabgp_daemon_daemonize=false
Environment=ETC=/etc
ExecStart=/usr/local/bin/exabgp /etc/exabgp/exabgp.conf
ExecReload=/bin/kill -USR1 $MAINPID

[Install]
WantedBy=multi-user.target

The files/exabgp.conf.j2 file looks like the below: -

process announce-routes {
    run /etc/exabgp/scripts/{{ pillar['exabgp']['check_script']['name'] }};
    encoder {{ pillar['exabgp']['check_script']['encoder'] }};
}

{% for peer in pillar['exabgp']['peers'] %}
neighbor {{ peer['ip'] }} {
    local-address {{ grains['bgp']['localip'] }};
    local-as {{ pillar['exabgp']['asn'] }};
    peer-as {{ peer['asn'] }};
    api {
        processes [ announce-routes ];
    }
}
{% endfor %}

In this, we define the process (announce-routes) and which script controls the route announcements and withdrawals. We also specify the encoder, either text or json, which sets the format of the messages ExaBGP sends to the script.

The last section creates the BGP peering sessions. It loops over a list of peers in the pillars for the node, creating a neighbor statement with the local autonomous system number, Equinix’s autonomous system number, and also the local address. The local address is sourced from the node’s grains, which will be covered in more detail in the Pulumi section.

We also have our scripts, which look like the below: -

scripts/nginx-check.sh

#!/bin/bash
while true; do
    curl -s localhost:80 > /dev/null;
    if [[ $? != 0 ]]; then
    {%- for ip in grains['anycast']['ipv4'] %}
        echo "withdraw route {{ ip['address'] }} next-hop {{ grains['bgp']['localip'] }}\n"
    {%- endfor %}
    else
    {%- for ip in grains['anycast']['ipv4'] %}
        echo "announce route {{ ip['address'] }} next-hop {{ grains['bgp']['localip'] }}\n"
    {%- endfor %}
    fi
    sleep 5
done

scripts/dns-check.sh

#!/bin/bash
while true; do
    /usr/bin/dig yetiops.net @127.0.0.1 > /dev/null;
    if [[ $? != 0 ]]; then
    {%- for ip in grains['anycast']['ipv4'] %}
        echo "withdraw route {{ ip['address'] }} next-hop {{ grains['bgp']['localip'] }}\n"
    {%- endfor %}
    else
    {%- for ip in grains['anycast']['ipv4'] %}
        echo "announce route {{ ip['address'] }} next-hop {{ grains['bgp']['localip'] }}\n"
    {%- endfor %}
    fi
    sleep 5
done

Both are very similar, in that they check the status of a command (either doing a cURL request against the local webserver, or a dig request against the local DNS server). The commands run inside of a while loop, making sure they are continuously evaluated. On every iteration, we check to see if the exit code of the command is not 0 (i.e. did the command fail).

If the command succeeded (e.g. the cURL request returned a page, or the DNS server answered the query) then we return the text announce route $ROUTE next-hop $LOCAL-IP for each route we want to announce. If the command fails (e.g. the cURL request was refused, or the DNS server did not respond) then we return the text withdraw route $ROUTE next-hop $LOCAL-IP for each route we want to stop announcing.

Finally, we sleep for 5 seconds between each check, otherwise we may risk overloading ExaBGP with route announcements/withdrawals.
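The check scripts combine a health test with a mapping from that result to ExaBGP text commands. A Go sketch of the same logic follows. exabgpCommands and nginxHealthy are hypothetical helpers, and the anycast and next-hop addresses are placeholders. Unlike the Bash scripts, which loop every 5 seconds, main performs a single pass. As with curl -s (without -f), any HTTP response, even a 404, counts as healthy.

```go
package main

import (
	"fmt"
	"net/http"
	"time"
)

// exabgpCommands maps the health-check result to the ExaBGP text-API lines
// the Bash check scripts print: announce when healthy, withdraw otherwise.
func exabgpCommands(healthy bool, anycastIPs []string, nextHop string) []string {
	action := "withdraw"
	if healthy {
		action = "announce"
	}
	cmds := make([]string, 0, len(anycastIPs))
	for _, ip := range anycastIPs {
		cmds = append(cmds, fmt.Sprintf("%s route %s next-hop %s", action, ip, nextHop))
	}
	return cmds
}

// nginxHealthy mimics curl -s: any HTTP response, whatever its status code,
// counts as healthy; connection errors and timeouts do not.
func nginxHealthy(client *http.Client, url string) bool {
	resp, err := client.Get(url)
	if err != nil {
		return false
	}
	resp.Body.Close()
	return true
}

func main() {
	client := &http.Client{Timeout: 2 * time.Second}
	anycast := []string{"198.51.100.10"} // placeholder anycast IP
	// One pass only; the Bash scripts repeat this every 5 seconds.
	healthy := nginxHealthy(client, "http://127.0.0.1:80")
	for _, cmd := range exabgpCommands(healthy, anycast, "10.0.0.10") {
		fmt.Println(cmd)
	}
}
```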

The key point here is that we are using grains rather than pillars. We will see in the Pulumi section why this is relevant, but in summary this is so that the code is reusable and not tied to specific IPs, and doesn’t require updating Salt pillars manually after provisioning our machines.

The nginx state

Finally, we have the nginx state: -

nginx:
  pkg.installed

/etc/nginx/nginx.conf:
  file.managed:
    - source: salt://nginx/files/nginx.conf.j2
    - user: root
    - group: root
    - mode: '0644'
    - template: jinja

/etc/nginx/sites-available/default:
  file.managed:
    - source: salt://nginx/files/default.j2
    - user: root
    - group: root
    - mode: '0644'
    - template: jinja

nginx.service:
  service.running:
    - watch:
      - file: /etc/nginx/nginx.conf
      - file: /etc/nginx/sites-available/default

/var/www/html/index.nginx-debian.html:
  file.absent

/var/www/html/index.html:
  file.managed:
    - source: salt://nginx/files/index.html.j2
    - user: root
    - group: root
    - mode: '0644'
    - template: jinja

In this state, we do the following: -

- We install nginx

- We update the nginx.conf configuration file using a template

- We update the default server configuration using a template

- We make sure nginx is running

  - We also watch for changes in the configuration file or default server file, restarting nginx if they do change

- We remove the default Debian nginx index file

- We place our own index.html file using a template

The files/nginx.conf.j2 file looks like the below: -

user www-data;
worker_processes auto;
pid /run/nginx.pid;
include /etc/nginx/modules-enabled/*.conf;

events {
    worker_connections 768;
    # multi_accept on;
}

http {
    ##
    # Basic Settings
    ##

    sendfile on;
    tcp_nopush on;
    tcp_nodelay on;
    keepalive_timeout 65;
    types_hash_max_size 2048;
    # server_tokens off;

    # server_names_hash_bucket_size 64;
    # server_name_in_redirect off;

    include /etc/nginx/mime.types;
    default_type application/octet-stream;

    ##
    # SSL Settings
    ##

    ssl_protocols TLSv1 TLSv1.1 TLSv1.2; # Dropping SSLv3, ref: POODLE
    ssl_prefer_server_ciphers on;

    ##
    # Logging Settings
    ##

    access_log /var/log/nginx/access.log;
    error_log /var/log/nginx/error.log;

    ##
    # Gzip Settings
    ##

    gzip on;

    # gzip_vary on;
    # gzip_proxied any;
    # gzip_comp_level 6;
    # gzip_buffers 16 8k;
    # gzip_http_version 1.1;
    # gzip_types text/plain text/css application/json application/javascript text/xml application/xml application/xml+rss text/javascript;

    ##
    # Virtual Host Configs
    ##

    include /etc/nginx/conf.d/*.conf;
    include /etc/nginx/sites-enabled/*;
}

The files/default.j2 file looks like the below: -

server {
    listen 127.0.0.1:80;
    {%- for ip in grains['anycast']['ipv4'] %}
    listen {{ ip['address'] }}:80;
    {%- endfor %}
    listen [::]:80 default_server;

    root /var/www/html;
    server_name _;

    location / {
        # First attempt to serve request as file, then
        # as directory, then fall back to displaying a 404.
        try_files $uri $uri/ =404;
    }
}

In this, we ensure that the default site listens on 127.0.0.1:80 (localhost, for our ExaBGP check script) and also on our anycast IPs.

Last, we have our files/index.html.j2 file: -

<!DOCTYPE html>
<html>
<head>
<title>Welcome to nginx!</title>
<style>
    body {
        width: 35em;
        margin: 0 auto;
        font-family: Tahoma, Verdana, Arial, sans-serif;
    }
</style>
</head>
<body>
<h1>Welcome to nginx on {{ grains['nodename'] }}!</h1>
<p>If you see this page, the nginx web server is successfully installed and
working serving from {{ grains['nodename'] }}. Further configuration is required.</p>

<p>For online documentation and support please refer to
<a href="http://nginx.org/">nginx.org</a>.<br/>
Commercial support is available at
<a href="http://nginx.com/">nginx.com</a>.</p>

<p><em>Thank you for using nginx.</em></p>
</body>
</html>

This is the standard nginx index page, but with our nodename displayed. This allows us to see which node our traffic to the anycast IP is forwarded to.

Pillars

Now that we have our states defined, we can cover our pillars.

The top.sls file for our pillars looks like the below: -

base:
  'bgp-*':
    - exabgp.bgp
    - bind.bgp
    - network.bgp

While we aren’t using bind in this post, the states and pillars exist for it in our repository so that you can change between serving nginx and running anycast DNS.

The exabgp pillar

In the exabgp/bgp.sls file, we have the following: -

exabgp:
  asn: 65000
  check_script:
    name: "nginx-check.sh"
    encoder: text
  peers:
    - ip: 169.254.255.1
      asn: 65530
    - ip: 169.254.255.2
      asn: 65530

Why are we hardcoding the peer values? This is because when you use Equinix Metal, you always peer with the same IPs no matter where you provision your servers. Therefore we do not need to derive what IPs we will peer with at runtime, as it is always the same.

Also, when using the Local BGP option with Equinix Metal, you always use the autonomous system number 65000 for your BGP process, and the Equinix Metal BGP sessions will always use the autonomous system number 65530.

This makes it quite straightforward in terms of what values we need to derive when creating our BGP sessions, as we already know most of them before they are even provisioned.

We also define our check_script, in this case the nginx-check.sh script, as we are using nginx to prove the anycast functionality.

The network pillar

In the network/bgp.sls file, we have the following: -

network:
  interfaces:
  {% for ip in grains['anycast']['ipv4'] %}
    - name: "lo:{{ loop.index }}"
      type: eth
      proto: static
      ipv4:
        address: {{ ip['address'] }}
        mask: {{ ip['mask'] }}
  {% endfor %}

This is used to discover values from the node grains. We could also do this directly in the state files (i.e. referring to the grains in templates/state files). However, using the above allows us to set additional interfaces not derived from grains, and the states don’t need to care whether the values originally came from grains or were set manually in pillars.
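The pillar's Jinja loop maps each anycast grain to a loopback alias, numbering from 1 because Jinja's loop.index is 1-based. The Go sketch below shows that mapping using hypothetical AnycastIP and Interface types and a placeholder address.

```go
package main

import "fmt"

// AnycastIP mirrors one entry of the anycast:ipv4 grain.
type AnycastIP struct {
	Address string
	Mask    string
}

// Interface mirrors one entry of the network:interfaces pillar.
type Interface struct {
	Name, Type, Proto, Address, Mask string
}

// loopbackInterfaces builds one loopback alias per anycast IP. The alias
// number is i+1, matching Jinja's 1-based loop.index.
func loopbackInterfaces(ips []AnycastIP) []Interface {
	ifaces := make([]Interface, 0, len(ips))
	for i, ip := range ips {
		ifaces = append(ifaces, Interface{
			Name:    fmt.Sprintf("lo:%d", i+1),
			Type:    "eth",
			Proto:   "static",
			Address: ip.Address,
			Mask:    ip.Mask,
		})
	}
	return ifaces
}

func main() {
	for _, iface := range loopbackInterfaces([]AnycastIP{{"198.51.100.10", "255.255.255.255"}}) {
		fmt.Printf("%+v\n", iface)
	}
}
```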

Pulumi

Now that we have our Salt states and pillars defined, we can create the following: -

- Two servers that will serve nginx, and advertise anycast IPs to Equinix

- A single Salt Master for the above servers to register with

  - The Salt Master will then configure the other two servers with ExaBGP and nginx

If you want to see the Pulumi code first, go here.

If you haven’t used Pulumi before, or Equinix Metal, then see my Pulumi Introduction post and the Equinix Metal section of my Pulumi with other providers post.

Steps required

As a brief summary, we need to do the following with our Pulumi code: -

- Create an Equinix Metal project, and enable BGP on the project

- Add an SSH key to the project

- Create a server running as a Salt Master, using cloud-init for first-time boot configuration

- Reserve a single Equinix-owned IPv4 address that we can use as our anycast IP

  - I don’t own any IPv4 or IPv6 blocks, hence the need to use Equinix’s IP space

- Create two servers that will serve nginx, and advertise our anycast IPv4 address back to Equinix (so they know which server(s) to send it to)

- Create Equinix IPv4 BGP sessions to each server, otherwise we have nothing to peer with!

Equinix Metal doesn’t provide firewalling or anything similar, meaning that if you want to secure your systems you can either: -

- Run host-based firewalling

- Provision Equinix Metal machines that serve as the security boundary to your other machines (effectively acting as a firewall(s) for the other servers)

As we have already covered the basics of Pulumi and using it with Equinix Metal in previous posts, I won’t cover how to set up a stack, a Pulumi project or how to generate credentials to use with Equinix Metal.

The Equinix Metal project

As I have been doing a lot with Equinix Metal recently, it makes sense to have an overall/“root” project, rather than projects that are created and deleted every time I run Pulumi or destroy resources in Pulumi.

To do this, we use something like the below: -

package main

import (
	metal "github.com/pulumi/pulumi-equinix-metal/sdk/v2/go/equinix"
	"github.com/pulumi/pulumi/sdk/v3/go/pulumi"
)

func main() {
	pulumi.Run(func(ctx *pulumi.Context) error {
		// Create an Equinix Metal resource (Project)
		project, err := metal.NewProject(ctx, "pulumi-yetiops", &metal.ProjectArgs{
			Name: pulumi.String("pulumi-yetiops"),
			BgpConfig: metal.ProjectBgpConfigArgs{
				Asn:            pulumi.Int(65000),
				DeploymentType: pulumi.String("local"),
			},
		})
		if err != nil {
			return err
		}

		// Export the name of the project
		ctx.Export("projectName", project.Name)
		ctx.Export("projectId", project.ID())
		return nil
	})
}

The above creates a project called pulumi-yetiops and enables BGP, using an autonomous system number of 65000 and a BGP type of local. Local means we are going to use Equinix’s own IP addresses to advertise back to them, rather than any we may own.

An important point to note is that this project is created in its own Pulumi stack, which we can then reference in other stacks. If you are familiar with Terraform, this is similar to referencing remote states.

Referencing the project

After creating a stack specific to the anycast BGP deployment, we reference the project we created above: -

func main() {
	pulumi.Run(func(ctx *pulumi.Context) error {
		conf := config.New(ctx, "")
		commonName := conf.Require("common_name")

		rootStack, err := pulumi.NewStackReference(ctx, "yetiops/equinix-metal-yetiops/staging", nil)
		if err != nil {
			return err
		}

As in the previous posts, we have our commonName variable that we can use across our resources. We can now focus on the pulumi.NewStackReference function.

In this, we pass in the context (standard for Pulumi with Go), followed by the fully qualified name (organisation/project/stack) of the stack for our overall Equinix Metal project. From here on, we can refer to the Export values/Outputs from the root project. An example of this is below: -

projectId := rootStack.GetStringOutput(pulumi.String("projectId"))

In the above, we get a string output of the root project’s projectId value (which we created using ctx.Export("projectId", project.ID()) in the root project). Now if the root project ID changes, this stack will be aware of the change.

Supplying a Project SSH key

Next, we add an SSH key to our project that will allow us to log in to the servers (and also their serial consoles): -

// Naming the variable currentUser avoids shadowing the os/user package
currentUser, err := user.Current()
if err != nil {
	return err
}

sshkeyPath := fmt.Sprintf("%v/.ssh/id_rsa.pub", currentUser.HomeDir)
// os.ReadFile replaces the deprecated ioutil.ReadFile (Go 1.16+)
sshkeyFile, err := os.ReadFile(sshkeyPath)
if err != nil {
	return err
}
sshkeyContents := string(sshkeyFile)

sshkey, err := metal.NewProjectSshKey(ctx, commonName, &metal.ProjectSshKeyArgs{
	Name:      pulumi.String(commonName),
	PublicKey: pulumi.String(sshkeyContents),
	ProjectId: projectId,
})
if err != nil {
	return err
}

As in the previous Pulumi posts, this gets the home directory of the current user running Pulumi, sources their RSA-based SSH key, and then adds it to the project.

Using cloud-init

In previous posts on Pulumi, I provided UserData (i.e. first-boot configuration) using either strings containing a small amount of cloud-config YAML or referenced a YAML file with the required cloud-config. However this can be a bit limiting in terms of how we provide variables, templated values and conditional logic.

Instead I am going to use the Juju Cloud-Init module so that generating the UserData is more flexible, and keeps our Pulumi stack entirely in Go.

The inspiration for this is the Tinkerbell Testing Infrastructure code created by David McKay/Rawkode (who also happens to be a fan of Saltstack and Pulumi!).

The salt-master

The cloud-config function for the salt-master is defined as follows: -

func saltMasterCloudInitConfig() string {
	c, err := cloudinit.New("focal")
	if err != nil {
		panic(err)
	}

	c.AddRunCmd("curl -fsSL https://bootstrap.saltproject.io -o install_salt.sh")
	c.AddRunCmd("sh install_salt.sh -P -M -x python3")

	c.AddRunTextFile("/etc/salt/master.d/master.conf", `autosign_grains_dir: /etc/salt/autosign-grains
fileserver_backend:
  - roots
  - gitfs
gitfs_remotes:
  - https://gitlab.com/stuh84/salt-anycast-equinix:
    - root: states
    - base: main
    - update_interval: 120
pillar_roots:
  base:
    - /srv/salt/pillars
ext_pillar:
  - git:
    - main https://gitlab.com/stuh84/salt-anycast-equinix:
      - root: pillars
      - env: base`, 0644)

	c.AddRunTextFile("/etc/salt/minion.d/minion.conf", `autosign_grains:
  - role
startup_states: highstate
grains:
  role: master`, 0644)

	c.AddRunCmd("mkdir -p /etc/salt/autosign-grains")
	c.AddRunCmd("echo -e \"master\nbgp\n\" > /etc/salt/autosign-grains/role")
	c.AddRunCmd("PRIVATE_IP=$(curl -s https://metadata.platformequinix.com/metadata | jq -r '.network.addresses | map(select(.public==false)) | first | .address')")
	c.AddRunCmd("mkdir -p /srv/salt")
	c.AddRunCmd("echo interface: ${PRIVATE_IP} > /etc/salt/master.d/private-interface.conf")
	c.AddRunCmd("echo master: ${PRIVATE_IP} > /etc/salt/minion.d/master.conf")
	c.AddRunCmd("systemctl daemon-reload")
	c.AddRunCmd("systemctl enable salt-master.service")
	c.AddRunCmd("systemctl restart --no-block salt-master.service")
	c.AddRunCmd("systemctl enable salt-minion.service")
	c.AddRunCmd("systemctl restart --no-block salt-minion.service")

	script, err := c.RenderScript()
	if err != nil {
		panic(err)
	}
	return script
}

To explain what we are doing here: -

- We create a new CloudInit definition

  - The focal reference works for both Ubuntu and Debian

- We use AddRunCmd functions (i.e. those that run only on first boot, rather than every boot) to install Salt, and make this machine a Salt Master

- We create a master.conf file to instruct the Salt Master to: -

  - Add autosigned grains based upon a node’s role (full explanation below)

  - Use the local file roots (the roots backend), and also gitfs (i.e. mounting a Git repository as a file system)

  - Specify our Salt repository as a gitfs_remote, meaning it will be mounted and used by Salt to provide states

    - We also tell it to update every 120s, to allow changes in the repository to be reflected on the file system

  - Provide our standard pillars directory

  - Reference the pillars directory in our Salt repository as an external pillar, allowing us to update the pillars directly through Git

- We add a minion configuration file which says to: -

  - Autosign our role grain

  - When the minion starts (and has been accepted by the master), automatically run a highstate (i.e. apply all configuration specific to this node)

  - Add a grain of our role called master

- We create our autosign_grains_dir directory

- We place inside it a file called role, containing the lines master and bgp

- We derive our private IPv4 address from the Equinix Metal metadata service

- We tell the Salt Master to listen only on our private interface (i.e. the interface with our private IP)

- We specify to the Minion that it should communicate with the master on the same private IPv4 address
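The jq filter used to find the private IP is compact but easy to misread. The Go sketch below performs the same selection against a trimmed, made-up metadata document. It models only the network.addresses[].address and public fields that the filter references. As with select(.public==false), an entry without a public field is skipped. Where the jq pipeline would print null if no private address exists, this sketch returns an error instead. firstPrivateAddress is a hypothetical helper.

```go
package main

import (
	"encoding/json"
	"errors"
	"fmt"
)

// metadata models only the fields the jq filter reads.
type metadata struct {
	Network struct {
		Addresses []struct {
			Address string `json:"address"`
			Public  *bool  `json:"public"` // pointer, so a missing field is distinguishable from false
		} `json:"addresses"`
	} `json:"network"`
}

// firstPrivateAddress mirrors:
//   jq -r '.network.addresses | map(select(.public==false)) | first | .address'
func firstPrivateAddress(raw []byte) (string, error) {
	var md metadata
	if err := json.Unmarshal(raw, &md); err != nil {
		return "", err
	}
	for _, a := range md.Network.Addresses {
		// Only entries whose public field is explicitly false are selected.
		if a.Public != nil && !*a.Public {
			return a.Address, nil
		}
	}
	return "", errors.New("no private address in metadata")
}

func main() {
	// Trimmed, hypothetical sample; real metadata contains many more fields.
	sample := []byte(`{"network":{"addresses":[
		{"address":"203.0.113.5","public":true},
		{"address":"10.0.0.10","public":false}]}}`)
	ip, err := firstPrivateAddress(sample)
	if err != nil {
		panic(err)
	}
	fmt.Println(ip)
}
```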

The subsequent steps run a SystemD daemon-reload to ensure we are taking into account the latest SystemD unit files, and then we enable and restart the salt-master and salt-minion services.

Finally, we render all of our steps into a cloud-init script and return it.

To explain what Grain Autosigning is, this is where a Salt Master will automatically accept any Minion that has a Grain that matches those specified in the files in the autosign-grains directory. For example, our autosign-grains/role file will look like the following: -

master
bgp

Any minion that has a grain of role with a value of master or bgp is automatically accepted. Without this, you need to manually log in to the salt-master and then accept the minions using something like salt-key -a 'salt-master.example.com' or salt-key -a 'bgp-01*'.

So long as we have a grain called role, and the contents of this grain match either master or bgp, the minion will then be accepted automatically (notice in the minion.conf file creation, we add the role grain).
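The Go code below is a simplified model of the matching described above. For each grain named in the minion's autosign_grains, it looks for a file of the same name in autosign_grains_dir and accepts the minion if the minion's value for that grain appears as a line in that file. This sketches the behaviour as this post describes it, not Salt's own implementation. shouldAutosign is a hypothetical name, and the directory is modelled as an in-memory map.

```go
package main

import (
	"fmt"
	"strings"
)

// shouldAutosign models grain-based autosigning: for each grain listed in the
// minion's autosign_grains, read the file with the same name from
// autosign_grains_dir (here a map of file name to contents) and accept the
// minion if its grain value is one of the file's lines.
func shouldAutosign(autosignGrains []string, minionGrains, dirFiles map[string]string) bool {
	for _, grain := range autosignGrains {
		contents, ok := dirFiles[grain]
		if !ok {
			continue
		}
		value, ok := minionGrains[grain]
		if !ok {
			continue
		}
		for _, line := range strings.Split(contents, "\n") {
			// Blank lines (e.g. from a trailing newline) never match.
			if l := strings.TrimSpace(line); l != "" && l == value {
				return true
			}
		}
	}
	return false
}

func main() {
	// Contents of /etc/salt/autosign-grains/role as written by the salt-master's cloud-init.
	dir := map[string]string{"role": "master\nbgp\n"}
	fmt.Println(shouldAutosign([]string{"role"}, map[string]string{"role": "bgp"}, dir)) // true
	fmt.Println(shouldAutosign([]string{"role"}, map[string]string{"role": "web"}, dir)) // false
}
```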

In a production scenario, you need to ensure that either your master/minion communication is completely private or that the accepted grain values are sufficiently unique (e.g. a UUID value) and masked/encrypted in your repository, otherwise any server running a Salt minion that has these grains could be accepted by the master. Depending on your Salt setup, this could provide anything from basic information about your infrastructure to user creation that allows connectivity to other areas of your network.

Referencing the function

To reference this function in our code, we use the following: -

saltCloudInit := saltMasterCloudInitConfig()

As the return type is a string, we can use this directly in the Equinix Device creation functions like so: -

UserData: pulumi.String(saltCloudInit)

The bgp/anycast nodes

The function we use for the anycast nodes is similar to the salt-master Cloud-Init creation function. However we do need to supply a few variables to the function for ExaBGP and the anycast IP to be configured.

We do this by creating a struct, and allowing our function to have an instance of this struct passed to it.

type BgpNodeConfig struct {
	anycastIP     string
	anycastIPMask string
	saltMasterIP  string
}

func bgpCloudInitConfig(config *BgpNodeConfig) string {
	c, err := cloudinit.New("focal")
	if err != nil {
		panic(err)
	}

	c.AddRunCmd("curl -fsSL https://bootstrap.saltproject.io -o install_salt.sh")
	c.AddRunCmd("sh install_salt.sh -P -x python3")
	c.AddRunCmd("PRIVATE_IP=$(curl -s https://metadata.platformequinix.com/metadata | jq -r '.network.addresses | map(select(.public==false)) | first | .address')")
	c.AddRunCmd(fmt.Sprintf("echo master: %s > /etc/salt/minion.d/master.conf", config.saltMasterIP))

	c.AddRunTextFile("/etc/salt/grains",
		fmt.Sprintf(
			"anycast:\n  ipv4:\n    - address: %s\n      mask: %s\n",
			config.anycastIP,
			config.anycastIPMask,
		), 0400,
	)

	c.AddRunTextFile("/etc/salt/minion.d/minion.conf", `autosign_grains:
  - role
startup_states: highstate`, 0644)

	c.AddRunCmd("echo \"bgp:\n  localip: ${PRIVATE_IP}\nrole: bgp\" >> /etc/salt/grains")
	c.AddRunCmd("systemctl daemon-reload")
	c.AddRunCmd("systemctl enable salt-minion.service")
	c.AddRunCmd("systemctl restart --no-block salt-minion.service")

	script, err := c.RenderScript()
	if err != nil {
		panic(err)
	}
	return script
}

The differences between this and the saltMasterCloudInitConfig function are as follows: -

- We create a BgpNodeConfig struct, which will be used to provide the anycast IPv4 address, its subnet mask, and the IPv4 address of the Salt Master

- We create the bgpCloudInitConfig function, which requires an input variable (an instance of the BgpNodeConfig struct) and returns the cloud-init script as a string

- We install salt, but omit the -M flag so that we don’t make this machine a master as well

- We point the minion at the Salt Master’s IPv4 address (taken from the BgpNodeConfig struct) in /etc/salt/minion.d/master.conf

- We create our /etc/salt/grains file, and populate it with our anycast IPv4 address and subnet mask

- We use a similar minion configuration file as for the master, except we are placing our grains in a separate file

- We append the private IPv4 address to our /etc/salt/grains file as the bgp.localip grain, as well as adding our bgp role grain (used to autosign this minion)

- Finally, we run a SystemD daemon-reload, and then enable and restart the salt-minion process

We render the cloud-init script, and return it as a string.

Because the local IP, Salt Master IP and Anycast IP address and mask will only be known after the machines are provisioned, we must ensure that we are not hardcoding values into the cloud-init templates. This avoids them being out-of-date/invalid if we recreate our infrastructure.

Another point to note is nothing is specific to each node in this function, so we can use the same generated cloud-init script for one, two or ten BGP-speaking anycast nodes.
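For reference, the Go sketch below renders roughly what /etc/salt/grains should contain on a BGP node once cloud-init has run. The first part is the anycast block written by AddRunTextFile, and the second is the bgp and role grains appended at boot. grainsFile is a hypothetical helper and the addresses are placeholders. In the real script, the shell substitutes the local IP from ${PRIVATE_IP}, not Go. The keys line up with what the templates read (grains['anycast']['ipv4'] and grains['bgp']['localip']).

```go
package main

import (
	"fmt"
	"strings"
)

// grainsFile renders the YAML that /etc/salt/grains should hold on a BGP node.
// Salt parses this file as YAML, so the indentation is significant.
func grainsFile(anycastIP, anycastMask, localIP string) string {
	var b strings.Builder
	// Written by AddRunTextFile from the BgpNodeConfig values.
	b.WriteString("anycast:\n  ipv4:\n")
	fmt.Fprintf(&b, "    - address: %s\n      mask: %s\n", anycastIP, anycastMask)
	// Appended at boot by the echo command; localIP stands in for ${PRIVATE_IP}.
	fmt.Fprintf(&b, "bgp:\n  localip: %s\nrole: bgp\n", localIP)
	return b.String()
}

func main() {
	fmt.Print(grainsFile("198.51.100.10", "255.255.255.255", "10.0.0.10"))
}
```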

Referencing the function

Unlike the Salt Master cloud-init script, we must supply a few variables that are only known at the time of being provisioned. To do this, we use something like the below: -

bgpCloudConfig := pulumi.All(
	anycastIpAllocation.Address,
	anycastIpAllocation.Netmask,
	saltMaster.AccessPrivateIpv4).ApplyT(
	func(args []interface{}) string {
		bgpCloudInitNodeConfig := BgpNodeConfig{
			anycastIP:     args[0].(string),
			anycastIPMask: args[1].(string),
			saltMasterIP:  args[2].(string),
		}
		return bgpCloudInitConfig(&bgpCloudInitNodeConfig)
	})

In the above we are: -

- Using an All Pulumi function to supply values not yet known (i.e. the Anycast IPv4 details and Salt Master IPv4 address) to an Apply function

  - The Apply function will run after the creation of the Salt Master server and the Anycast IP allocation

  - It creates an instance of the BgpNodeConfig struct, with the values derived from the provisioned anycast IP and Salt Master

  - This instance of the struct is then passed to the bgpCloudInitConfig function

  - The function returns the cloud-init script ready to pass to our BGP nodes, containing the correct anycast IP and Salt Master details

As mentioned, we don’t know the details required by the function until Pulumi runs, so they are Pulumi Outputs. The Juju cloudinit module has no concept of a Pulumi Output (i.e. it cannot natively evaluate a Pulumi Output type). Therefore we use the Apply function to take the Output values and turn them into plain Go types (e.g. a string or int) that we can pass to our cloud-init function.

The value returned by ApplyT (bgpCloudConfig) is itself a Pulumi Output. Pulumi therefore tracks its dependencies on the other Pulumi Outputs (i.e. the anycast IP details and the Salt Master IP), and only resolves it once they are known.
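To make the ordering concrete, here is a toy model of the All/Apply pattern in plain Go. It is not the Pulumi SDK. The `future` type and the `all` function are hypothetical stand-ins. They show only that the callback runs after every input is known, whatever order the inputs arrive in, and that its result is again a deferred value. Real Pulumi Outputs also carry dependency information, secrets and "unknown during preview" states, which this sketch ignores. The argument order also differs: `all` takes the callback first, because Go variadic parameters must come last.

```go
package main

import "fmt"

// future is a toy stand-in for a Pulumi Output: a value that becomes known
// later. It models ordering only and is NOT the Pulumi SDK type.
type future struct {
	done chan struct{}
	val  interface{}
}

func newFuture() *future { return &future{done: make(chan struct{})} }

// resolve records the value and releases everything waiting on it.
// It must be called exactly once per future.
func (f *future) resolve(v interface{}) {
	f.val = v
	close(f.done)
}

// get blocks until the value is known.
func (f *future) get() interface{} {
	<-f.done
	return f.val
}

// all mimics the shape of pulumi.All(...).ApplyT(fn): once every input is
// known, fn runs with the plain values (in argument order), and its result
// becomes a new future that other consumers can depend on.
func all(fn func(args []interface{}) interface{}, inputs ...*future) *future {
	out := newFuture()
	go func() {
		args := make([]interface{}, len(inputs))
		for i, in := range inputs {
			args[i] = in.get()
		}
		out.resolve(fn(args))
	}()
	return out
}

func main() {
	address, netmask, masterIP := newFuture(), newFuture(), newFuture()
	cfg := all(func(args []interface{}) interface{} {
		return fmt.Sprintf("master: %s, anycast: %s mask %s",
			args[2].(string), args[0].(string), args[1].(string))
	}, address, netmask, masterIP)

	// The values arrive later, in any order, as "resources" finish.
	go masterIP.resolve("10.12.60.1")
	go netmask.resolve("255.255.255.255")
	go address.resolve("147.75.32.0")

	fmt.Println(cfg.get())
}
```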

Creating the salt-master

Now that we have the correct cloud-init data for provisioning a salt-master, we can create one like so: -

saltMaster, err := metal.NewDevice(ctx, "salt-master", &metal.DeviceArgs{
	Hostname:        pulumi.String("salt-master"),
	Plan:            pulumi.String("c3.small.x86"),
	Metro:           pulumi.String("am"),
	OperatingSystem: pulumi.String("ubuntu_20_04"),
	BillingCycle:    pulumi.String("hourly"),
	ProjectId:       projectId,
	ProjectSshKeyIds: pulumi.StringArray{
		sshkey.ID(),
	},
	UserData: pulumi.String(saltCloudInit),
})
if err != nil {
	return err
}

A small difference between the previous Pulumi post and this one is that Equinix Metal now use the term Metro rather than Facility to refer to the location you create infrastructure in. This is because Equinix Metal can now provide VLANs, elastic IPs and Private IPv4 connectivity across multiple facilities in a geographic area, rather than being limited to a single location. If you are familiar with AWS, think of a Metro like a Region, and a Facility like an Availability Zone.

This has the added benefit that more capacity is available across multiple facilities, without being limited by network topology (i.e. VLANs being restricted to a single facility) between the infrastructure you provision.

Other than this, the above is very similar to other servers we have created in Equinix Metal. We pass in our saltCloudInit variable, which contains the cloud-init script generated by the saltMasterCloudInitConfig function.

Creating the anycast IP

We create the anycast IP with the NewReservedIpBlock function from the Pulumi Equinix Metal provider, which reserves a single IP from the metro’s IPv4 allocation. If you have your own IP space and use the Global BGP option when adding BGP to the initial Equinix Metal project, you are not limited by the metro as to where you can advertise the IPs from.

anycastIpAllocation, err := metal.NewReservedIpBlock(ctx, "anycast-ip", &metal.ReservedIpBlockArgs{
	ProjectId: projectId,
	Metro:     pulumi.String("am"),
	Type:      pulumi.String("public_ipv4"),
	Quantity:  pulumi.Int(1),
})
if err != nil {
	return err
}

The above allocates a single IPv4 address in the Equinix Metal am (Amsterdam) metro. We specify public_ipv4, as the other option (global_ipv4) is only valid if deploying directly to a facility.
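A reservation with a quantity of 1 is a single address. The rendered grains later carry it as 147.75.32.0 with a mask of 255.255.255.255, and ExaBGP announces it as 147.75.32.0/32. The sketch below uses only Go's standard net package to show how a dotted netmask maps to that prefix length. The `toCIDR` helper is hypothetical and is not part of the Salt states in this post.

```go
package main

import (
	"fmt"
	"net"
)

// toCIDR converts an IPv4 address and dotted-quad netmask (as stored in the
// anycast grains) into prefix notation, masking off any host bits.
func toCIDR(addr, mask string) (string, error) {
	ip := net.ParseIP(addr).To4()
	if ip == nil {
		return "", fmt.Errorf("not an IPv4 address: %q", addr)
	}
	m := net.ParseIP(mask).To4()
	if m == nil {
		return "", fmt.Errorf("not an IPv4 netmask: %q", mask)
	}
	// Size reports 0, 0 for non-contiguous masks such as 255.0.255.0.
	ones, bits := net.IPMask(m).Size()
	if bits == 0 {
		return "", fmt.Errorf("non-contiguous netmask: %q", mask)
	}
	return fmt.Sprintf("%s/%d", ip.Mask(net.IPMask(m)), ones), nil
}

func main() {
	cidr, err := toCIDR("147.75.32.0", "255.255.255.255")
	if err != nil {
		panic(err)
	}
	fmt.Println(cidr)
}
```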

Creating our anycast nodes and BGP sessions

Now that we have our anycast IP, and we have generated our cloud-init script, we can create our anycast nodes and the BGP sessions from Equinix to the nodes: -

bgpPrimary, err := metal.NewDevice(ctx, "bgp-01", &metal.DeviceArgs{
	Hostname:        pulumi.String("bgp-01"),
	Plan:            pulumi.String("c3.small.x86"),
	Metro:           pulumi.String("am"),
	OperatingSystem: pulumi.String("debian_10"),
	BillingCycle:    pulumi.String("hourly"),
	ProjectId:       projectId,
	ProjectSshKeyIds: pulumi.StringArray{
		sshkey.ID(),
	},
	UserData: pulumi.StringOutput(pulumi.Sprintf("%v", bgpCloudConfig)),
})
if err != nil {
	return err
}

bgpPrimarySession, err := metal.NewBgpSession(ctx, "bgp-01_BGP_SESSION", &metal.BgpSessionArgs{
	AddressFamily: pulumi.String("ipv4"),
	DeviceId:      bgpPrimary.ID(),
})
if err != nil {
	return err
}

In the device creation, we use Debian Buster rather than Ubuntu 20.04 LTS (which we used for the ‘salt-master’), mainly because managing networking is currently easier on Debian Buster. We also wrap bgpCloudConfig in pulumi.Sprintf, which returns a pulumi.StringOutput. The UserData field expects a Pulumi string input and does not accept the generic Pulumi Output that ApplyT returns.

After this, we create an IPv4 BGP session that is associated with this server.

The second anycast node we create is identical to this, except we use the word Secondary instead of Primary, and 02 instead of 01 for the hostname and resource names.
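Since only the number changes between nodes, the resource names could be generated rather than written out by hand. The stdlib-only sketch below reproduces the naming used here (bgp-01, bgp-01_BGP_SESSION, and so on) for any number of nodes. The `nodeNames` helper is hypothetical. In the Pulumi program, each iteration would call metal.NewDevice and metal.NewBgpSession with these names. That part is not shown, because it needs the Pulumi SDK.

```go
package main

import "fmt"

// nodeNames is a hypothetical helper returning the device and BGP session
// resource names for n anycast nodes, zero-padded to two digits.
func nodeNames(n int) (devices, sessions []string) {
	for i := 1; i <= n; i++ {
		d := fmt.Sprintf("bgp-%02d", i)
		devices = append(devices, d)
		sessions = append(sessions, d+"_BGP_SESSION")
	}
	return devices, sessions
}

func main() {
	devices, sessions := nodeNames(3)
	for i := range devices {
		fmt.Println(devices[i], sessions[i])
	}
}
```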

All the code

We have the code in two separate files, one called main.go (which contains all of the Equinix Metal-specific code) and one called cloud-init.go that contains all of the cloud-init-specific code.

main.go

package main

import (
	"fmt"
	"io/ioutil"
	"os/user"

	metal "github.com/pulumi/pulumi-equinix-metal/sdk/v2/go/equinix"
	"github.com/pulumi/pulumi/sdk/v3/go/pulumi"
	"github.com/pulumi/pulumi/sdk/v3/go/pulumi/config"
)

func main() {
	pulumi.Run(func(ctx *pulumi.Context) error {
		conf := config.New(ctx, "")
		commonName := conf.Require("common_name")

		// The Equinix Metal project ID is exported by a separate stack
		rootStack, err := pulumi.NewStackReference(ctx, "yetiops/equinix-metal-yetiops/staging", nil)
		if err != nil {
			return err
		}
		projectId := rootStack.GetStringOutput(pulumi.String("projectId"))

		// Add the local user's public SSH key to the project
		currentUser, err := user.Current()
		if err != nil {
			return err
		}
		sshKeyPath := fmt.Sprintf("%v/.ssh/id_rsa.pub", currentUser.HomeDir)
		sshKeyFile, err := ioutil.ReadFile(sshKeyPath)
		if err != nil {
			return err
		}
		sshkey, err := metal.NewProjectSshKey(ctx, commonName, &metal.ProjectSshKeyArgs{
			Name:      pulumi.String(commonName),
			PublicKey: pulumi.String(string(sshKeyFile)),
			ProjectId: projectId,
		})
		if err != nil {
			return err
		}

		// Salt Master, bootstrapped with a static cloud-init script
		saltCloudInit := saltMasterCloudInitConfig()
		saltMaster, err := metal.NewDevice(ctx, "salt-master", &metal.DeviceArgs{
			Hostname:        pulumi.String("salt-master"),
			Plan:            pulumi.String("c3.small.x86"),
			Metro:           pulumi.String("am"),
			OperatingSystem: pulumi.String("ubuntu_20_04"),
			BillingCycle:    pulumi.String("hourly"),
			ProjectId:       projectId,
			ProjectSshKeyIds: pulumi.StringArray{
				sshkey.ID(),
			},
			UserData: pulumi.String(saltCloudInit),
		})
		if err != nil {
			return err
		}

		// A single public IPv4 address in the Amsterdam metro, used for anycast
		anycastIpAllocation, err := metal.NewReservedIpBlock(ctx, "anycast-ip", &metal.ReservedIpBlockArgs{
			ProjectId: projectId,
			Metro:     pulumi.String("am"),
			Type:      pulumi.String("public_ipv4"),
			Quantity:  pulumi.Int(1),
		})
		if err != nil {
			return err
		}

		// Render the BGP node cloud-init once the anycast IP and Salt Master exist
		bgpCloudConfig := pulumi.All(
			anycastIpAllocation.Address,
			anycastIpAllocation.Netmask,
			saltMaster.AccessPrivateIpv4).ApplyT(
			func(args []interface{}) string {
				bgpCloudInitNodeConfig := BgpNodeConfig{
					anycastIP:     args[0].(string),
					anycastIPMask: args[1].(string),
					saltMasterIP:  args[2].(string),
				}

				return bgpCloudInitConfig(&bgpCloudInitNodeConfig)
			})

		// First anycast node and its BGP session
		bgpPrimary, err := metal.NewDevice(ctx, "bgp-01", &metal.DeviceArgs{
			Hostname:        pulumi.String("bgp-01"),
			Plan:            pulumi.String("c3.small.x86"),
			Metro:           pulumi.String("am"),
			OperatingSystem: pulumi.String("debian_10"),
			BillingCycle:    pulumi.String("hourly"),
			ProjectId:       projectId,
			ProjectSshKeyIds: pulumi.StringArray{
				sshkey.ID(),
			},
			UserData: pulumi.StringOutput(pulumi.Sprintf("%v", bgpCloudConfig)),
		})
		if err != nil {
			return err
		}

		bgpPrimarySession, err := metal.NewBgpSession(ctx, "bgp-01_BGP_SESSION", &metal.BgpSessionArgs{
			AddressFamily: pulumi.String("ipv4"),
			DeviceId:      bgpPrimary.ID(),
		})
		if err != nil {
			return err
		}

		// Second anycast node and its BGP session (same cloud-init script)
		bgpSecondary, err := metal.NewDevice(ctx, "bgp-02", &metal.DeviceArgs{
			Hostname:        pulumi.String("bgp-02"),
			Plan:            pulumi.String("c3.small.x86"),
			Metro:           pulumi.String("am"),
			OperatingSystem: pulumi.String("debian_10"),
			BillingCycle:    pulumi.String("hourly"),
			ProjectId:       projectId,
			ProjectSshKeyIds: pulumi.StringArray{
				sshkey.ID(),
			},
			UserData: pulumi.StringOutput(pulumi.Sprintf("%v", bgpCloudConfig)),
		})
		if err != nil {
			return err
		}

		bgpSecondarySession, err := metal.NewBgpSession(ctx, "bgp-02_BGP_SESSION", &metal.BgpSessionArgs{
			AddressFamily: pulumi.String("ipv4"),
			DeviceId:      bgpSecondary.ID(),
		})
		if err != nil {
			return err
		}

		ctx.Export("saltMasterIP", saltMaster.AccessPublicIpv4)
		ctx.Export("bgpPrimaryIP", bgpPrimary.AccessPublicIpv4)
		ctx.Export("bgpSecondaryIP", bgpSecondary.AccessPublicIpv4)
		ctx.Export("bgpPrimarySessionStatus", bgpPrimarySession.Status)
		ctx.Export("bgpSecondarySessionStatus", bgpSecondarySession.Status)
		ctx.Export("anycastIP", anycastIpAllocation.Address)

		return nil
	})
}

cloud-init.go

package main

import (
	"fmt"

	"github.com/juju/juju/cloudconfig/cloudinit"
)

// BgpNodeConfig holds the values that are only known once Pulumi has
// provisioned the anycast IP and the Salt Master
type BgpNodeConfig struct {
	anycastIP     string
	anycastIPMask string
	saltMasterIP  string
}

// saltMasterCloudInitConfig renders the bootstrap script for the Salt Master
func saltMasterCloudInitConfig() string {
	c, err := cloudinit.New("focal")
	if err != nil {
		panic(err)
	}

	// Install Salt via the bootstrap script (-M also installs the master)
	c.AddRunCmd("curl -fsSL https://bootstrap.saltproject.io -o install_salt.sh")
	c.AddRunCmd("sh install_salt.sh -P -M -x python3")

	// Master: autosign by grain, states from gitfs, pillars from git
	c.AddRunTextFile("/etc/salt/master.d/master.conf", `autosign_grains_dir: /etc/salt/autosign-grains
fileserver_backend:
  - roots
  - gitfs
gitfs_remotes:
  - https://gitlab.com/stuh84/salt-anycast-equinix:
    - root: states
    - base: main
    - update_interval: 120
pillar_roots:
  base:
    - /srv/salt/pillars
ext_pillar:
  - git:
    - main https://gitlab.com/stuh84/salt-anycast-equinix:
      - root: pillars
      - env: base`, 0644)

	// The master's own minion, with the "master" role grain
	c.AddRunTextFile("/etc/salt/minion.d/minion.conf", `autosign_grains:
  - role
startup_states: highstate
grains:
  role: master`, 0644)

	// Autosign any minion whose role grain is master or bgp
	c.AddRunCmd("mkdir -p /etc/salt/autosign-grains")
	c.AddRunCmd("echo -e \"master\nbgp\n\" > /etc/salt/autosign-grains/role")

	// Listen on the private IPv4 address from the metadata service
	c.AddRunCmd("PRIVATE_IP=$(curl -s https://metadata.platformequinix.com/metadata | jq -r '.network.addresses | map(select(.public==false)) | first | .address')")
	c.AddRunCmd("mkdir -p /srv/salt")
	c.AddRunCmd("echo interface: ${PRIVATE_IP} > /etc/salt/master.d/private-interface.conf")
	c.AddRunCmd("echo master: ${PRIVATE_IP} > /etc/salt/minion.d/master.conf")

	c.AddRunCmd("systemctl daemon-reload")
	c.AddRunCmd("systemctl enable salt-master.service")
	c.AddRunCmd("systemctl restart --no-block salt-master.service")
	c.AddRunCmd("systemctl enable salt-minion.service")
	c.AddRunCmd("systemctl restart --no-block salt-minion.service")

	script, err := c.RenderScript()
	if err != nil {
		panic(err)
	}

	return script
}

// bgpCloudInitConfig renders the bootstrap script shared by all BGP nodes
func bgpCloudInitConfig(config *BgpNodeConfig) string {
	c, err := cloudinit.New("focal")
	if err != nil {
		panic(err)
	}

	// Install the Salt minion only
	c.AddRunCmd("curl -fsSL https://bootstrap.saltproject.io -o install_salt.sh")
	c.AddRunCmd("sh install_salt.sh -P -x python3")

	// Discover this node's private IPv4 address, and point at the Salt Master
	c.AddRunCmd("PRIVATE_IP=$(curl -s https://metadata.platformequinix.com/metadata | jq -r '.network.addresses | map(select(.public==false)) | first | .address')")
	c.AddRunCmd(fmt.Sprintf("echo master: %s > /etc/salt/minion.d/master.conf", config.saltMasterIP))

	// Anycast address and mask as grains for the Salt states
	c.AddRunTextFile("/etc/salt/grains",
		fmt.Sprintf(
			"anycast:\n  ipv4:\n    - address: %s\n      mask: %s\n",
			config.anycastIP,
			config.anycastIPMask,
		), 0400,
	)

	c.AddRunTextFile("/etc/salt/minion.d/minion.conf", `autosign_grains:
  - role
startup_states: highstate`, 0644)

	// Append the private IPv4 address and the "bgp" role grain
	c.AddRunCmd("echo \"bgp:\n  localip: ${PRIVATE_IP}\nrole: bgp\" >> /etc/salt/grains")

	c.AddRunCmd("systemctl daemon-reload")
	c.AddRunCmd("systemctl enable salt-minion.service")
	c.AddRunCmd("systemctl restart --no-block salt-minion.service")

	script, err := c.RenderScript()
	if err != nil {
		panic(err)
	}

	return script
}
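The Salt Master's script writes master and bgp into /etc/salt/autosign-grains/role, and each minion sends its role grain via autosign_grains. The sketch below is a simplified model of that matching step in plain Go: a minion is accepted if a grain it sends has a value listed in the file of the same name. It is not Salt's implementation, and the `parseAllowed` and `autosign` helpers are hypothetical. This sketch skips blank lines, such as the trailing one that echo -e leaves in the file.

```go
package main

import (
	"bufio"
	"fmt"
	"strings"
)

// parseAllowed reads an autosign grains file (one accepted value per line),
// ignoring blank lines.
func parseAllowed(contents string) map[string]bool {
	allowed := make(map[string]bool)
	sc := bufio.NewScanner(strings.NewReader(contents))
	for sc.Scan() {
		if v := strings.TrimSpace(sc.Text()); v != "" {
			allowed[v] = true
		}
	}
	return allowed
}

// autosign models the check: files maps a grain name to the contents of
// autosign_grains_dir/<grain>, and sent holds the grains the minion offers.
func autosign(files map[string]string, sent map[string]string) bool {
	for grain, value := range sent {
		if contents, ok := files[grain]; ok && parseAllowed(contents)[value] {
			return true
		}
	}
	return false
}

func main() {
	// Equivalent of /etc/salt/autosign-grains/role written by the master's cloud-init
	files := map[string]string{"role": "master\nbgp\n\n"}
	fmt.Println(autosign(files, map[string]string{"role": "bgp"}))
	fmt.Println(autosign(files, map[string]string{"role": "web"}))
}
```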

Provisioning

Now that we have created our Pulumi code and our Salt configuration files, we can run Pulumi and watch everything create!

Below is an Asciinema output of my terminal when running pulumi up, so you can see the resources being created: -

We can also take a look at the generated cloud-init scripts: -

salt-master

#!/bin/bash
set -e
test -n "$JUJU_PROGRESS_FD" || (exec {JUJU_PROGRESS_FD}>&2) 2>/dev/null && exec {JUJU_PROGRESS_FD}>&2 || JUJU_PROGRESS_FD=2
(
function package_manager_loop {
    local rc=
    while true; do
        if ($*); then
            return 0
        else
            rc=$?
        fi
        if [ $rc -eq 100 ]; then
            sleep 10s
            continue
        fi
        return $rc
    done
}

curl -fsSL https://bootstrap.saltproject.io -o install_salt.sh
sh install_salt.sh -P -M -x python3
install -D -m 644 /dev/null '/etc/salt/master.d/master.conf'
printf '%s\n' 'autosign_grains_dir: /etc/salt/autosign-grains
fileserver_backend:
  - roots
  - gitfs
gitfs_remotes:
  - https://gitlab.com/stuh84/salt-anycast-equinix:
    - root: states
    - base: main
    - update_interval: 120
pillar_roots:
  base:
    - /srv/salt/pillars
ext_pillar:
  - git:
    - main https://gitlab.com/stuh84/salt-anycast-equinix:
      - root: pillars
      - env: base' > '/etc/salt/master.d/master.conf'
install -D -m 644 /dev/null '/etc/salt/minion.d/minion.conf'
printf '%s\n' 'autosign_grains:
  - role
startup_states: highstate
grains:
  role: master' > '/etc/salt/minion.d/minion.conf'
mkdir -p /etc/salt/autosign-grains
echo -e "master
bgp
" > /etc/salt/autosign-grains/role
PRIVATE_IP=$(curl -s https://metadata.platformequinix.com/metadata | jq -r '.network.addresses | map(select(.public==false)) | first | .address')
mkdir -p /srv/salt
echo interface: ${PRIVATE_IP} > /etc/salt/master.d/private-interface.conf
echo master: ${PRIVATE_IP} > /etc/salt/minion.d/master.conf
systemctl daemon-reload
systemctl enable salt-master.service
systemctl restart --no-block salt-master.service
systemctl enable salt-minion.service
systemctl restart --no-block salt-minion.service
) 2>&1

bgp-01

#!/bin/bash
set -e
test -n "$JUJU_PROGRESS_FD" || (exec {JUJU_PROGRESS_FD}>&2) 2>/dev/null && exec {JUJU_PROGRESS_FD}>&2 || JUJU_PROGRESS_FD=2
(
function package_manager_loop {
    local rc=
    while true; do
        if ($*); then
            return 0
        else
            rc=$?
        fi
        if [ $rc -eq 100 ]; then
            sleep 10s
            continue
        fi
        return $rc
    done
}

curl -fsSL https://bootstrap.saltproject.io -o install_salt.sh
sh install_salt.sh -P -x python3
PRIVATE_IP=$(curl -s https://metadata.platformequinix.com/metadata | jq -r '.network.addresses | map(select(.public==false)) | first | .address')
echo master: 10.12.60.1 > /etc/salt/minion.d/master.conf
install -D -m 400 /dev/null '/etc/salt/grains'
printf '%s\n' 'anycast:
  ipv4:
    - address: 147.75.32.0
      mask: 255.255.255.255
' > '/etc/salt/grains'
install -D -m 644 /dev/null '/etc/salt/minion.d/minion.conf'
printf '%s\n' 'autosign_grains:
  - role
startup_states: highstate' > '/etc/salt/minion.d/minion.conf'
echo "bgp:
  localip: ${PRIVATE_IP}
role: bgp" >> /etc/salt/grains
systemctl daemon-reload
systemctl enable salt-minion.service
systemctl restart --no-block salt-minion.service
) 2>&1

bgp-02

#!/bin/bash
set -e
test -n "$JUJU_PROGRESS_FD" || (exec {JUJU_PROGRESS_FD}>&2) 2>/dev/null && exec {JUJU_PROGRESS_FD}>&2 || JUJU_PROGRESS_FD=2
(
function package_manager_loop {
    local rc=
    while true; do
        if ($*); then
            return 0
        else
            rc=$?
        fi
        if [ $rc -eq 100 ]; then
            sleep 10s
            continue
        fi
        return $rc
    done
}

curl -fsSL https://bootstrap.saltproject.io -o install_salt.sh
sh install_salt.sh -P -x python3
PRIVATE_IP=$(curl -s https://metadata.platformequinix.com/metadata | jq -r '.network.addresses | map(select(.public==false)) | first | .address')
echo master: 10.12.60.1 > /etc/salt/minion.d/master.conf
install -D -m 400 /dev/null '/etc/salt/grains'
printf '%s\n' 'anycast:
  ipv4:
    - address: 147.75.32.0
      mask: 255.255.255.255
' > '/etc/salt/grains'
install -D -m 644 /dev/null '/etc/salt/minion.d/minion.conf'
printf '%s\n' 'autosign_grains:
  - role
startup_states: highstate' > '/etc/salt/minion.d/minion.conf'
echo "bgp:
  localip: ${PRIVATE_IP}
role: bgp" >> /etc/salt/grains
systemctl daemon-reload
systemctl enable salt-minion.service
systemctl restart --no-block salt-minion.service
) 2>&1
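All three scripts discover the node's private IPv4 address with the jq filter `.network.addresses | map(select(.public==false)) | first | .address`. To spell out what that filter selects, here is an equivalent in Go using encoding/json. The struct models only the fields the filter touches. The sample document is hypothetical and trimmed, not real Equinix Metal metadata. One difference: `jq -r` prints null when no private address exists, whereas this sketch returns an error.

```go
package main

import (
	"encoding/json"
	"errors"
	"fmt"
)

// metadata models only the fields used by the jq filter. Public is a
// pointer so that a missing field is not treated as false (jq's
// select(.public==false) would skip it too).
type metadata struct {
	Network struct {
		Addresses []struct {
			Address string `json:"address"`
			Public  *bool  `json:"public"`
		} `json:"addresses"`
	} `json:"network"`
}

// firstPrivateAddress mirrors:
// .network.addresses | map(select(.public==false)) | first | .address
func firstPrivateAddress(raw []byte) (string, error) {
	var m metadata
	if err := json.Unmarshal(raw, &m); err != nil {
		return "", err
	}
	for _, a := range m.Network.Addresses {
		if a.Public != nil && !*a.Public {
			return a.Address, nil
		}
	}
	return "", errors.New("no private address in metadata")
}

func main() {
	// Hypothetical, trimmed-down metadata document for illustration only
	sample := []byte(`{"network":{"addresses":[
		{"address":"198.51.100.10","public":true},
		{"address":"10.12.60.3","public":false}]}}`)
	ip, err := firstPrivateAddress(sample)
	if err != nil {
		panic(err)
	}
	fmt.Println(ip)
}
```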

Does it work?

If it didn’t work after all of the Pulumi code and Saltstack configuration, it would be disappointing, wouldn’t it?

We shall verify functionality by testing first if we can reach the anycast IP in a browser. Then, we’ll stop the nginx service on the “active” node (i.e. whichever node the requests to the anycast IP are being forwarded to), and see if it fails over to the other node.

Can we reach it?

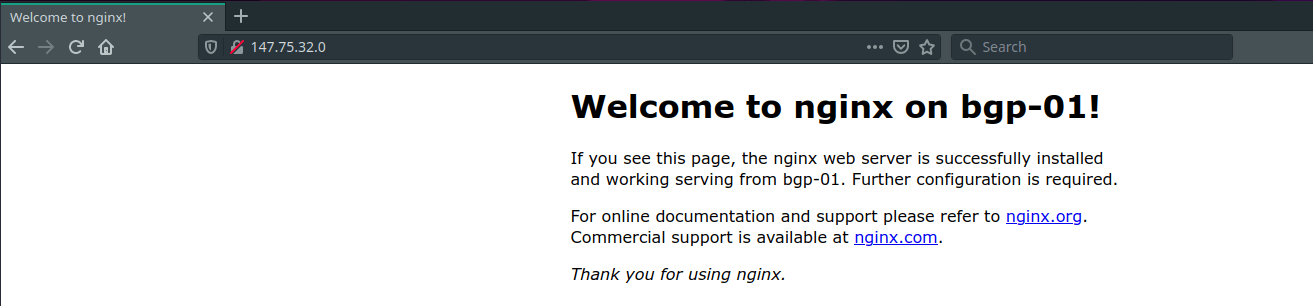

First, lets check that nginx is available on the anycast IP of 147.75.32.0: -

There it is! Other than running the pulumi up command, we have done nothing else to the provisioned servers. This proves that not only were the servers started, but that they sourced our Salt configuration correctly, discovered the correct grains for configuring ExaBGP and the anycast IP interface, and that the states ran with no intervention.

Taking the service down on the “active” node

We will now take down nginx on bgp-01 to make sure that ExaBGP will stop advertising the route when nginx isn’t available.

We can do this from the salt-master. We could log in to bgp-01 directly instead, but using Salt means we don’t also have to log in to bgp-02 if we need to take services down there too: -

$ ssh root@145.40.96.7

Welcome to Ubuntu 20.04.2 LTS (GNU/Linux 5.4.0-71-generic x86_64)

* Documentation: https://help.ubuntu.com

* Management: https://landscape.canonical.com

* Support: https://ubuntu.com/advantage

System information as of Fri 14 May 2021 02:53:24 PM UTC

System load: 0.0

Usage of /: 0.5% of 438.11GB

Memory usage: 3%

Swap usage: 0%

Temperature: 39.0 C

Processes: 286

Users logged in: 0

IPv4 address for bond0: 145.40.96.7

IPv6 address for bond0: 2604:1380:4601:5200::1

* Pure upstream Kubernetes 1.21, smallest, simplest cluster ops!

https://microk8s.io/

46 updates can be installed immediately.

13 of these updates are security updates.

To see these additional updates run: apt list --upgradable

The programs included with the Ubuntu system are free software;

the exact distribution terms for each program are described in the

individual files in /usr/share/doc/*/copyright.

Ubuntu comes with ABSOLUTELY NO WARRANTY, to the extent permitted by

applicable law.

root@salt-master:~# salt-key -L

Accepted Keys:

bgp-01

bgp-02

salt-master

Denied Keys:

Unaccepted Keys:

Rejected Keys:

root@salt-master:~# salt 'bgp-01' service.stop 'nginx'

bgp-01:

True

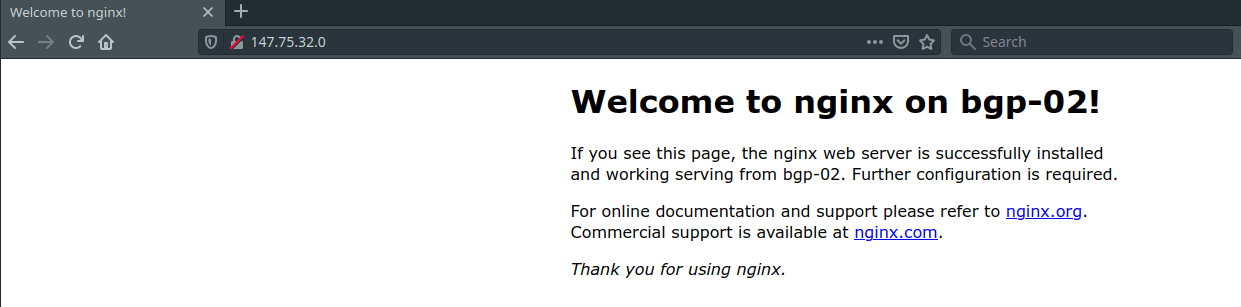

Do we see requests to the anycast IP go to bgp-02?

Yes we do! Success.

Bring the service back up

We will now bring the service back up on bgp-01, and see if traffic moves back over: -

root@salt-master:~# salt 'bgp-01' service.start 'nginx'

bgp-01:

True

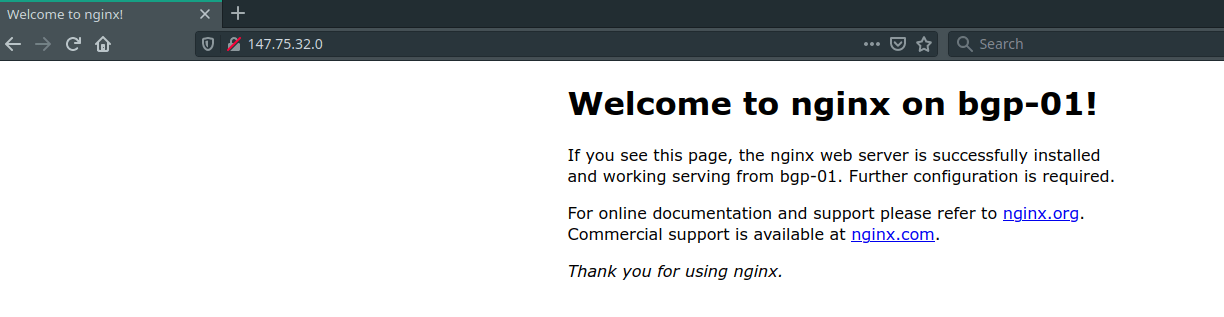

Does the anycast traffic go back to bgp-01?

Yes it does!

There is no guarantee that traffic will go back to bgp-01. Whether bgp-01 or bgp-02 is the preferred path depends on the Equinix network topology. In some cases both paths may be evaluated as equal, at which point the oldest route is preferred. If we withdraw the route from bgp-01 only, bgp-02 then holds the oldest route, even after bgp-01 re-announces it.
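The tie-break described above can be modelled in a few lines. The sketch below is deliberately simplified and assumes every attribute that a real router compares earlier in best-path selection is equal, so only the time each route was received decides. The `path` type, the `best` function and the timings are hypothetical.

```go
package main

import "fmt"

// path is a simplified BGP path: all other attributes are assumed equal,
// so only when the route was received (seconds since an arbitrary start)
// differs between candidates.
type path struct {
	via        string
	receivedAt int
}

// best returns the oldest of a set of otherwise-equal paths.
func best(paths []path) (path, bool) {
	if len(paths) == 0 {
		return path{}, false
	}
	b := paths[0]
	for _, p := range paths[1:] {
		if p.receivedAt < b.receivedAt {
			b = p
		}
	}
	return b, true
}

func main() {
	paths := []path{{"bgp-01", 0}, {"bgp-02", 1}}
	p, _ := best(paths)
	fmt.Println("initially:", p.via)

	// bgp-01 withdraws and later re-announces, so its route is now the newest.
	paths[0].receivedAt = 300
	p, _ = best(paths)
	fmt.Println("after re-announce:", p.via)
}
```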

ExaBGP logs

Let’s take a look at some of the ExaBGP log messages that show the routes being announced and withdrawn: -

May 14 14:53:59 bgp-01 exabgp[15320]: 14:53:59 | 15320 | api | route added to neighbor 169.254.255.1 local-ip 10.12.60.3 local-as 65000 peer-as 65530 router-id 10.12.60.3 family-allowed in-open, neighbor 169.254.255.2 local-ip 10.12.60.3 local-as 65000 peer-as 65530 router-id 10.12.60.3 family-allowed in-open : 147.75.32.0/32 next-hop 10.12.60.3

May 14 14:54:04 bgp-01 exabgp[15320]: 14:54:04 | 15320 | api | route added to neighbor 169.254.255.1 local-ip 10.12.60.3 local-as 65000 peer-as 65530 router-id 10.12.60.3 family-allowed in-open, neighbor 169.254.255.2 local-ip 10.12.60.3 local-as 65000 peer-as 65530 router-id 10.12.60.3 family-allowed in-open : 147.75.32.0/32 next-hop 10.12.60.3

May 14 14:54:09 bgp-01 exabgp[15320]: 14:54:09 | 15320 | api | route removed from neighbor 169.254.255.1 local-ip 10.12.60.3 local-as 65000 peer-as 65530 router-id 10.12.60.3 family-allowed in-open, neighbor 169.254.255.2 local-ip 10.12.60.3 local-as 65000 peer-as 65530 router-id 10.12.60.3 family-allowed in-open : 147.75.32.0/32 next-hop 10.12.60.3

May 14 14:54:14 bgp-01 exabgp[15320]: 14:54:14 | 15320 | api | route removed from neighbor 169.254.255.1 local-ip 10.12.60.3 local-as 65000 peer-as 65530 router-id 10.12.60.3 family-allowed in-open, neighbor 169.254.255.2 local-ip 10.12.60.3 local-as 65000 peer-as 65530 router-id 10.12.60.3 family-allowed in-open : 147.75.32.0/32 next-hop 10.12.60.3

May 14 14:54:19 bgp-01 exabgp[15320]: 14:54:19 | 15320 | api | route removed from neighbor 169.254.255.1 local-ip 10.12.60.3 local-as 65000 peer-as 65530 router-id 10.12.60.3 family-allowed in-open, neighbor 169.254.255.2 local-ip 10.12.60.3 local-as 65000 peer-as 65530 router-id 10.12.60.3 family-allowed in-open : 147.75.32.0/32 next-hop 10.12.60.3

May 14 14:54:24 bgp-01 exabgp[15320]: 14:54:24 | 15320 | api | route added to neighbor 169.254.255.1 local-ip 10.12.60.3 local-as 65000 peer-as 65530 router-id 10.12.60.3 family-allowed in-open, neighbor 169.254.255.2 local-ip 10.12.60.3 local-as 65000 peer-as 65530 router-id 10.12.60.3 family-allowed in-open : 147.75.32.0/32 next-hop 10.12.60.3

May 14 14:54:29 bgp-01 exabgp[15320]: 14:54:29 | 15320 | api | route added to neighbor 169.254.255.1 local-ip 10.12.60.3 local-as 65000 peer-as 65530 router-id 10.12.60.3 family-allowed in-open, neighbor 169.254.255.2 local-ip 10.12.60.3 local-as 65000 peer-as 65530 router-id 10.12.60.3 family-allowed in-open : 147.75.32.0/32 next-hop 10.12.60.3

We can see that at 14:54:09, the nginx-check script failed to reach nginx on bgp-01 and told ExaBGP to withdraw the anycast route. ExaBGP then sent a BGP withdrawal for the anycast route to both Equinix peers (169.254.255.1 and 169.254.255.2), and logged the withdrawal again every five seconds while nginx stayed down.
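The nginx-check script itself is not shown in this section. As a hedged illustration of the general pattern, the sketch below shows a process that ExaBGP could run: it checks nginx every five seconds, which matches the spacing of the log lines, and writes an announce or withdraw command to stdout, where ExaBGP's process API reads commands. This is not the author's script. The check URL, the two-second timeout and the "status below 400 counts as healthy" rule are assumptions.

```go
package main

import (
	"fmt"
	"net/http"
	"os"
	"time"
)

const (
	anycastPrefix = "147.75.32.0/32"    // the anycast route seen in the logs
	checkURL      = "http://127.0.0.1/" // assumption: nginx answers locally
	interval      = 5 * time.Second     // matches the 5s spacing in the logs
)

// command returns the ExaBGP API line for the current health state.
func command(healthy bool, prefix string) string {
	if healthy {
		return "announce route " + prefix + " next-hop self"
	}
	return "withdraw route " + prefix + " next-hop self"
}

// nginxUp is a hypothetical check: any response with a status below 400
// counts as healthy; errors and timeouts count as down.
func nginxUp(client *http.Client) bool {
	resp, err := client.Get(checkURL)
	if err != nil {
		return false
	}
	resp.Body.Close()
	return resp.StatusCode < 400
}

func main() {
	client := &http.Client{Timeout: 2 * time.Second}
	// Runs for as long as ExaBGP keeps the process alive.
	for {
		fmt.Fprintln(os.Stdout, command(nginxUp(client), anycastPrefix))
		time.Sleep(interval)
	}
}
```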

15 seconds later, we started nginx again, and at 14:54:24 ExaBGP started advertising the route again.
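The 15-second gap can be read directly from the log timestamps. The sketch below is a stdlib-only illustration that finds the first "route removed" line and the next "route added" line, then returns the difference. The `withdrawalWindow` helper is hypothetical. It relies on the syslog time being the third whitespace-separated field and ignores the date.

```go
package main

import (
	"fmt"
	"strings"
	"time"
)

// withdrawalWindow finds the first "route removed from" line and the first
// "route added to" line after it, and returns the time between them.
func withdrawalWindow(lines []string) (time.Duration, bool) {
	var removedAt time.Time
	seenRemoved := false
	for _, l := range lines {
		f := strings.Fields(l)
		if len(f) < 3 {
			continue
		}
		ts, err := time.Parse("15:04:05", f[2])
		if err != nil {
			continue
		}
		switch {
		case !seenRemoved && strings.Contains(l, "route removed from"):
			removedAt, seenRemoved = ts, true
		case seenRemoved && strings.Contains(l, "route added to"):
			return ts.Sub(removedAt), true
		}
	}
	return 0, false
}

func main() {
	// Trimmed copies of the bgp-01 log lines shown above
	logs := []string{
		"May 14 14:54:04 bgp-01 exabgp[15320]: 14:54:04 | 15320 | api | route added to neighbor 169.254.255.1",
		"May 14 14:54:09 bgp-01 exabgp[15320]: 14:54:09 | 15320 | api | route removed from neighbor 169.254.255.1",
		"May 14 14:54:14 bgp-01 exabgp[15320]: 14:54:14 | 15320 | api | route removed from neighbor 169.254.255.1",
		"May 14 14:54:24 bgp-01 exabgp[15320]: 14:54:24 | 15320 | api | route added to neighbor 169.254.255.1",
	}
	if d, ok := withdrawalWindow(logs); ok {
		fmt.Println("route withdrawn for", d)
	}
}
```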

Summary

Conditional route advertisement with ExaBGP is exceedingly powerful, and allows us to build highly available services without necessarily requiring clustering protocols (e.g. corosync) or something like VRRP (which relies on Layer 2 connectivity between peers).

Being able to use Pulumi and Saltstack to configure this as well means that you can quite easily scale up the number of anycast nodes you require. Move the BGP node creation inside a for loop, and you can create as many nodes as you have money to pay for!

I want to thank David McKay/Rawkode again, both for the inspiration (through the Tinkerbell testing infrastructure) and for planting some seeds in my brain with the Metal Monday and Rawkode Live series.